As more workloads are managed by Snowflake, storage costs will continue to rise if stored in native proprietary format which leads many organizations to reach a critical point where stakeholders begin looking for other options. This is precisely when Snowflake Iceberg tables emerge as a strategic and cost-effective alternative.

Understanding what Snowflake Iceberg is and how it actually affects your compute and storage economics has become a priority for any data team running analytics at scale.

Open table formats offer real interoperability benefits and genuine cost advantages. But they also introduce tradeoffs that, if you ignore them, will offset every dollar you saved on storage.

Rather than profiting from increased compute consumption like some platform-native tools, Revefi focuses entirely on optimizing spend. It typically identifies an average 60% cost savings for modern data teams. Read on to learn how open table formats impact your cloud infrastructure costs.

Key takeaways

- Using Snowflake Iceberg tables allows you to query data stored externally in open formats while maintaining native data warehouse performance.

- Adopting open lakehouse architectures separates compute from storage, giving you better control over your raw cloud storage costs.

- Understanding specific Snowflake Iceberg limitations is vital to avoid unexpected query latency and hidden compute charges.

- The Revefi AI Agent, Raden, provides end-to-end visibility and automated anomaly detection to optimize your cloud data investments.

- Implementing automated lifecycle policies and strict metadata governance ensures continuous cost efficiency across your entire data ecosystem.

What is Snowflake Iceberg?

Understanding the fundamentals

Snowflake Iceberg is Snowflake's support for Apache Iceberg tables, which lets teams work with open-format data stored in cloud object storage as if it were managed inside Snowflake. It combines the structure and reliability of a modern table format with the scalability of a data lake, making it easier to query large datasets while supporting features like schema evolution, time travel, and transactional consistency.

How Snowflake Iceberg works

Snowflake Iceberg allows users to query and manage Iceberg tables in external cloud storage using standard SQL, without requiring all data to be converted into a proprietary Snowflake format. This gives engineering teams the flexibility of keeping data in open storage while still benefiting from Snowflake's performance, governance, and analytics capabilities. The result is a hybrid model that bridges data lakes and data warehouses in a more interoperable way.

Why Snowflake Iceberg is reshaping data cost management

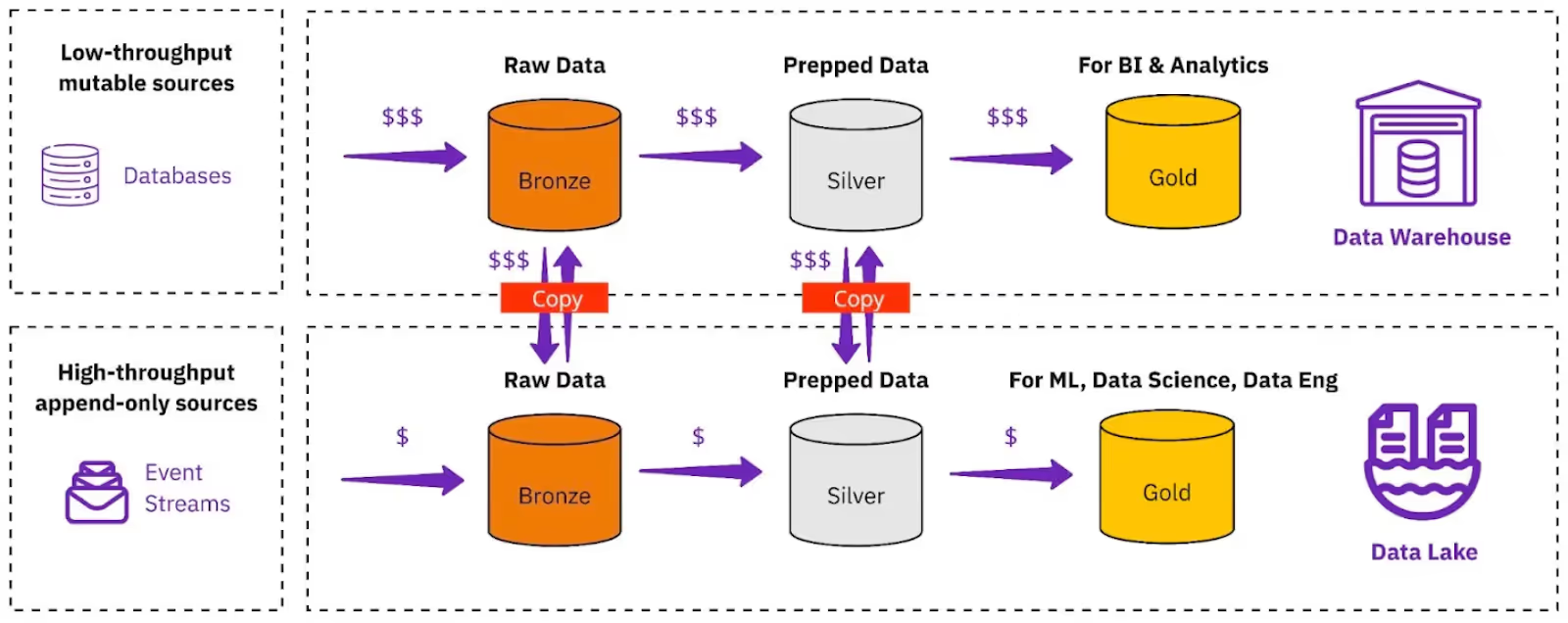

Separation of compute and storage economics

Traditional architectures lock your storage and compute costs into a single vendor ecosystem. By storing data externally, you separate these economics entirely, allowing you to negotiate raw storage pricing independently. This structural shift is a primary driver for cloud spend reduction across modern enterprises.

Open table formats and vendor flexibility

Relying on proprietary formats restricts your ability to migrate workloads or test new processing engines efficiently. Open table formats guarantee that your critical data remains fully accessible to multiple tools simultaneously. This vendor flexibility fosters competition based strictly on compute efficiency and price.

Cost visibility challenges teams face

While external storage is cheaper, managing the compute required to query it introduces new complexities. Without granular FinOps capabilities, data teams struggle to attribute query costs to specific users or departments accurately. Overcoming these visibility gaps is critical for achieving true automated Snowflake warehouse optimization.

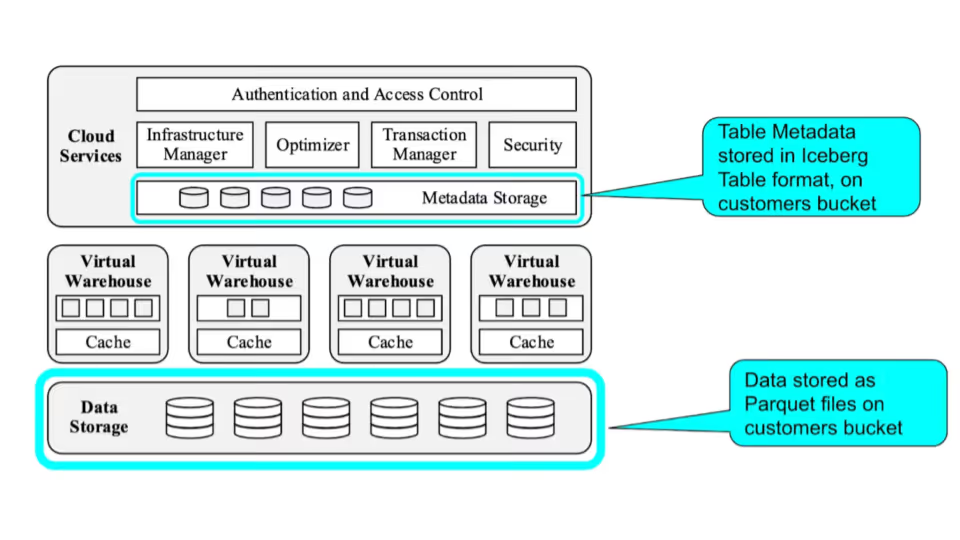

Core architecture behind Snowflake Iceberg tables

Catalog and metadata layers

The catalog serves as the definitive source of truth, tracking the current state of every table in your environment. Beneath the catalog, the metadata layer records the exact locations of data files and complex schema details. This hierarchy enables the processing engine to plan queries efficiently without scanning your entire data lake.

Manifest and snapshot management

A snapshot perfectly represents the complete state of a data table at a specific point in time. Manifest files list the individual physical data files associated with each snapshot, providing granular tracking for every transaction. Proper management of these manifests is essential to prevent metadata bloat.

Data file structure and optimization

The actual raw data resides in efficient columnar formats like Parquet or ORC within your cloud buckets. How you organize and compress these physical files directly dictates your query speed and overall processing compute. Consistently optimizing this file structure ensures your warehouse consumes fewer resources.

Snowflake native tables vs Iceberg tables: What is the difference?

Performance tradeoffs

Native tables benefit directly from optimized micro-partitioning and proprietary caching mechanisms built into the engine. While external tables perform well, they inherently introduce slight network latency during raw data retrieval. Your engineering team must weigh this performance tradeoff against the potential for storage cost savings.

Cost implications

Native storage incurs premium vendor pricing, whereas external data lake storage is commoditized and inexpensive. However, scanning poorly optimized external files can trigger a higher compute bill due to longer query execution times. Balancing these specific cost implications is the core challenge of cloud financial management.

Governance and interoperability

Native tables restrict data access solely to authorized users within the specific platform ecosystem. External tables offer universal interoperability, securely allowing separate tools like Apache Spark to read the exact same data simultaneously. This shared governance model eliminates expensive data duplication and complex pipelines.

Types of Snowflake Iceberg tables

Snowflake-managed Iceberg tables

In this integrated model, the warehouse acts as the official catalog and manages all metadata operations automatically. You gain the interoperability of open formats while retaining the automated maintenance features of a native environment. This option simplifies administration but keeps your metadata catalog tied to a single vendor.

Externally managed Iceberg tables

Here, an external catalog exclusively tracks your table metadata. The data warehouse only reads the information, offering you architectural portability. This method requires more manual engineering effort but delivers protection against platform lock-in.

Benefits of Snowflake Iceberg tables for modern data platforms

Open lakehouse interoperability

Building an open lakehouse natively allows your data scientists and business analysts to collaborate on a unified dataset. You eliminate the need to maintain separate storage silos for different departmental reporting tools. This seamless interoperability accelerates time to insight across your entire enterprise architecture.

Improved data sharing and portability

Sharing massive analytical datasets internally or externally becomes effortless when using a universally accepted open standard. You can safely grant access to third-party partners without forcing them to adopt your specific data warehouse vendor. This high level of portability was a major theme during recent Snowflake summit highlights.

Flexible storage optimization

You have the freedom to define exactly how your files are compressed, encrypted, and distributed across your cloud buckets. This granular level of control allows your data architects to design customized and inexpensive storage tiers. Flexible optimization ensures you only ever pay for the exact performance levels your specific workloads demand.

Performance considerations when using Snowflake Iceberg

Metadata overhead and query latency

Every single query requires the underlying engine to parse external metadata files before execution begins. If your table contains millions of small, unoptimized files, this metadata overhead causes noticeable reporting delays. Keeping your metadata layer clean and compact is mandatory for maintaining acceptable query latency.

Caching and file sizing strategies

Using localized caching temporarily stores frequently accessed external data directly on your active compute node. Combining smart caching protocols with optimal file sizing prevents the processing engine from constantly fetching data across the network. These strategies reduce your active processing time and lower overall compute spend.

Warehouse sizing impact

Querying massive external datasets often requires deploying larger compute clusters to handle network input demands. If your open tables are poorly partitioned, you will be forced to scale up continuously just to maintain baseline performance. Careful warehouse sizing actively prevents unnecessary compute over-provisioning.

Snowflake Iceberg limitations teams must understand

Cross-region and multi-cloud constraints

While the open format implies total freedom, physically moving data across different cloud regions still incurs egress fees. Your analytics engine must reside in the exact same region as your storage bucket to achieve optimal performance. Failing to recognize these geographic constraints leads to unexpected networking charges.

Metadata refresh complexity

Externally managed tables routinely require your data engineers to manually refresh the metadata so the engine recognizes newly ingested data. If this critical refresh process fails, your business users will actively query stale information. Managing this synchronization systematically adds operational complexity to your workflows.

Write and mutation limitations

Executing frequent row-level updates or deletes on external files is significantly slower than modifying traditional native tables. The system must completely rewrite entire underlying files to process even a single minor data mutation. Understanding these write-heavy Snowflake Iceberg limitations helps you design more efficient, append-only data pipelines.

Hidden cost risks

Because raw storage is incredibly cheap, modern teams often hoard useless data indefinitely, leading to out-of-control scanning costs. Every unoptimized query executed against a massive external table burns through expensive compute credits rapidly. Without strict governance, these hidden risks can negate the financial benefits of an open lakehouse architecture.

Best practices to optimize Snowflake Iceberg cost and performance

File compaction and snapshot management

Regularly compact your small data files directly into larger, optimized blocks to accelerate query read times. Expire old snapshots systematically to prevent your cloud storage buckets from filling up with obsolete data. Routine technical maintenance keeps your environment lean and performant over time.

Partition strategy optimization

Design your partitions strictly based on the exact columns your business users filter by most frequently during daily operations. Intelligent partitioning allows the processing engine to skip irrelevant files entirely during query execution. This single architectural practice drastically reduces the amount of data scanned and lowers your compute spend.

Storage lifecycle management

Implement automated cloud storage policies to transition older, less frequently accessed data to cheaper archive tiers. By proactively shifting historical records to cold storage, you minimize your baseline infrastructure storage costs. Lifecycle management remains a fundamental component of proactive cloud financial operations.

Monitoring unused compute and storage

Deploy continuous observability tools to track exactly which tables and compute clusters sit idle. Shutting down unused compute resources and dropping abandoned external tables prevents active budget leakage. Constant operational vigilance ensures your data infrastructure operates at maximum financial efficiency.

How Revefi helps control Snowflake Iceberg cost and usage

Revefi provides a comprehensive platform for teams looking to optimize their data cost, performance, and quality. Serving as the AI agent for Snowflake Cost Optimization, Revefi fundamentally changes how modern data teams approach their data infrastructure usage.

Detecting inefficient Iceberg workloads

Raden, the AI agent powering Revefi, analyzes your query history natively to identify workloads struggling with external data retrieval. The system pinpoints poor partitioning, missing filters, and unoptimized file structures automatically. You receive actionable insights that directly drive spend reduction without requiring manual human effort.

Identifying hidden storage and compute waste

The platform maps your complete data ecosystem to uncover abandoned external tables and idle compute clusters. By accurately highlighting resource fragmentation, Revefi enables your FinOps leaders to eliminate unnecessary cloud infrastructure costs. This automated oversight ensures you maintain total cost governance continuously.

Automating FinOps visibility across open table formats

Revefi bridges the visibility gap by attributing exact compute costs to specific open table queries, users, and departments. You gain end-to-end visibility into your complex hybrid architecture natively and securely. With automated dynamic scaling and anomaly detection, your team has the tools needed to achieve sustained cost efficiency.

.avif)