Data Cost and Observability for Financial Services and Banking

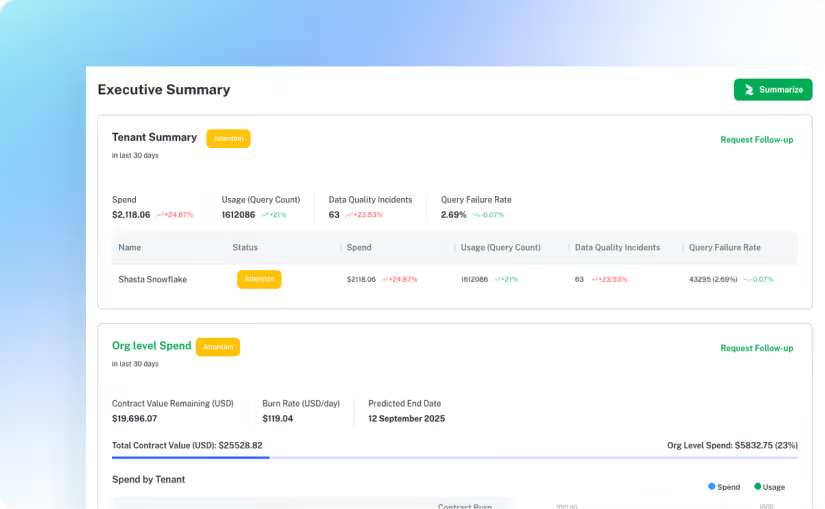

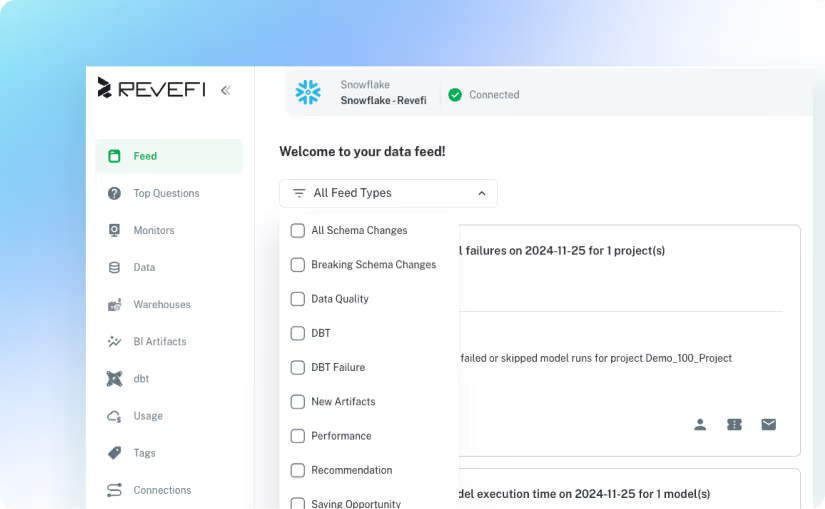

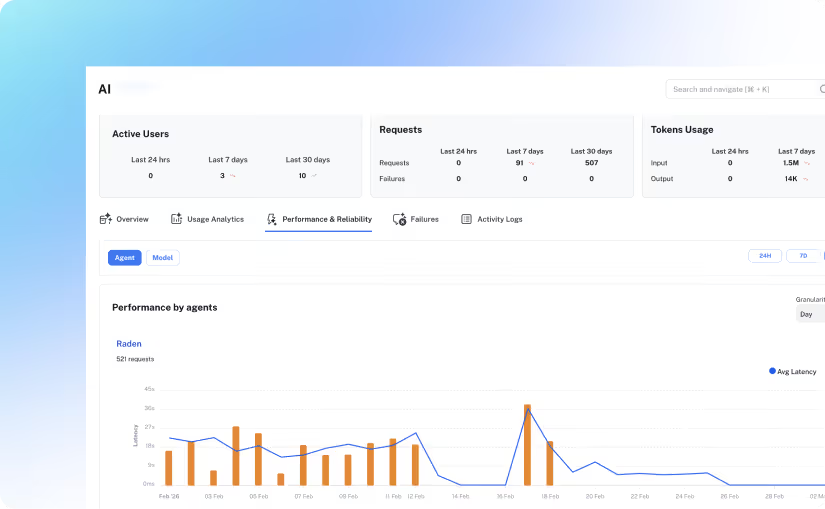

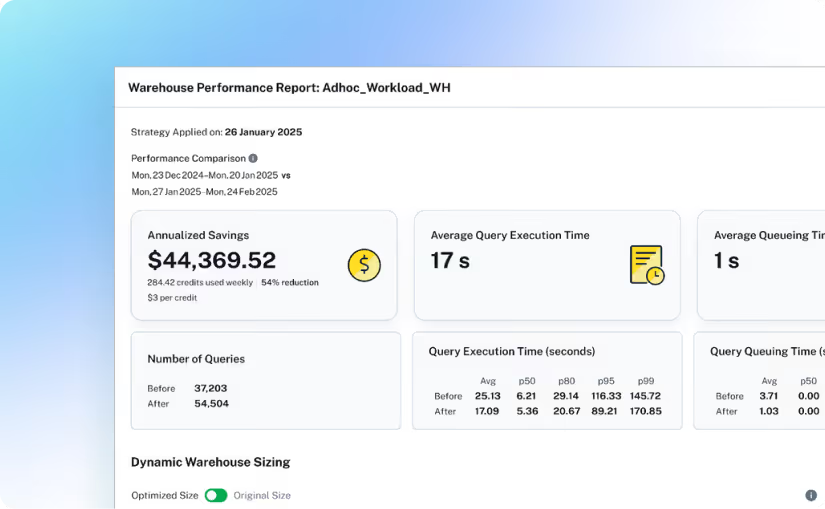

Revefi optimizes financial services data workloads with continuous visibility and control over cost, data quality, and performance.
