AI Observability

For Agentic AI and LLMs get insights for every model call, agent action, latency, and failure across your AI stack. From single LLM calls to multi-step agent workflows, every interaction is visible, traceable, and audit ready.

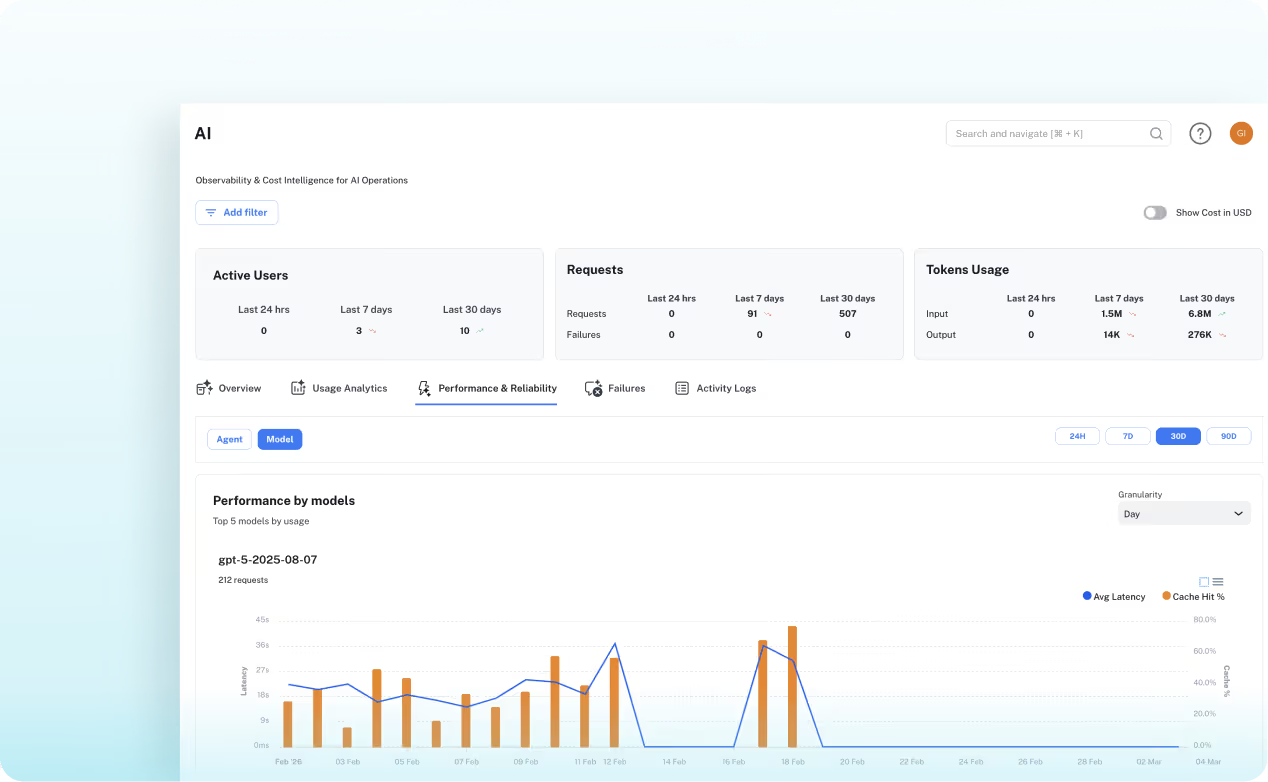

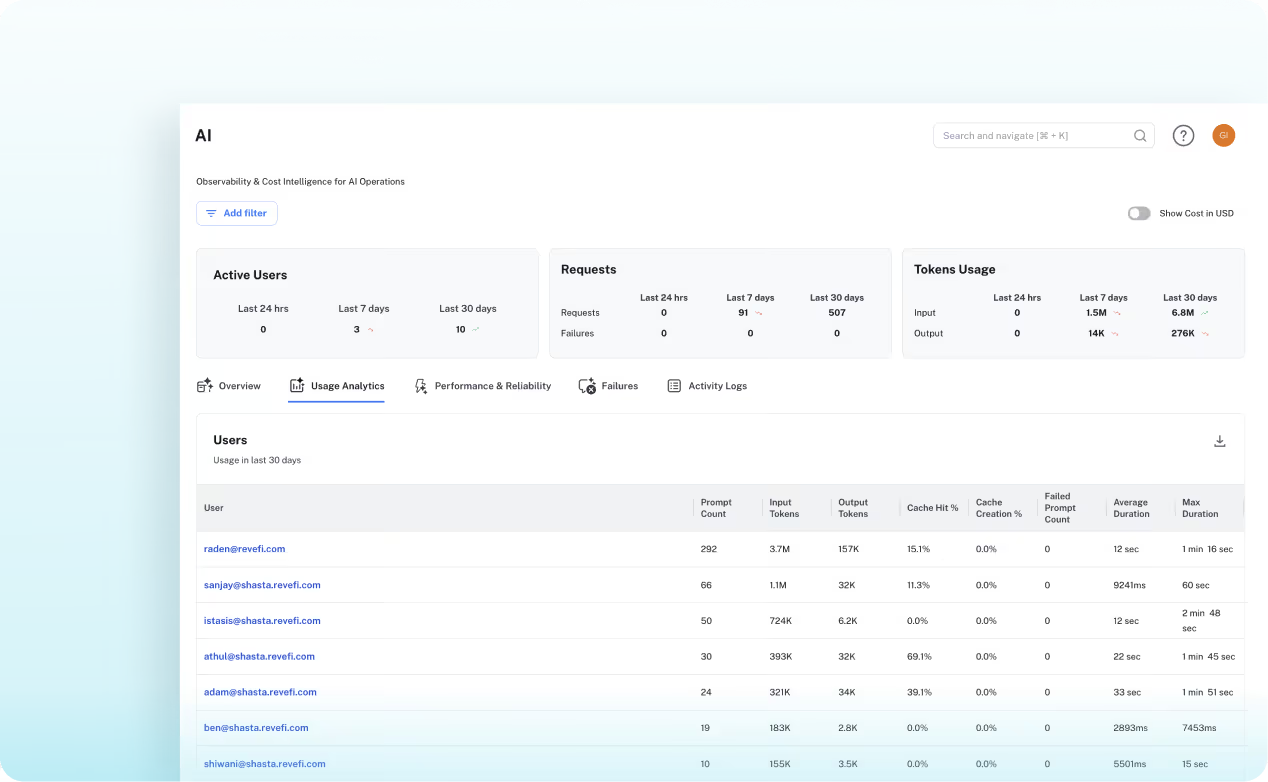

- Agentic Observability: Full user → agent → model attribution chain with per-agent latency, request volume, and complete prompt/response capture for every step

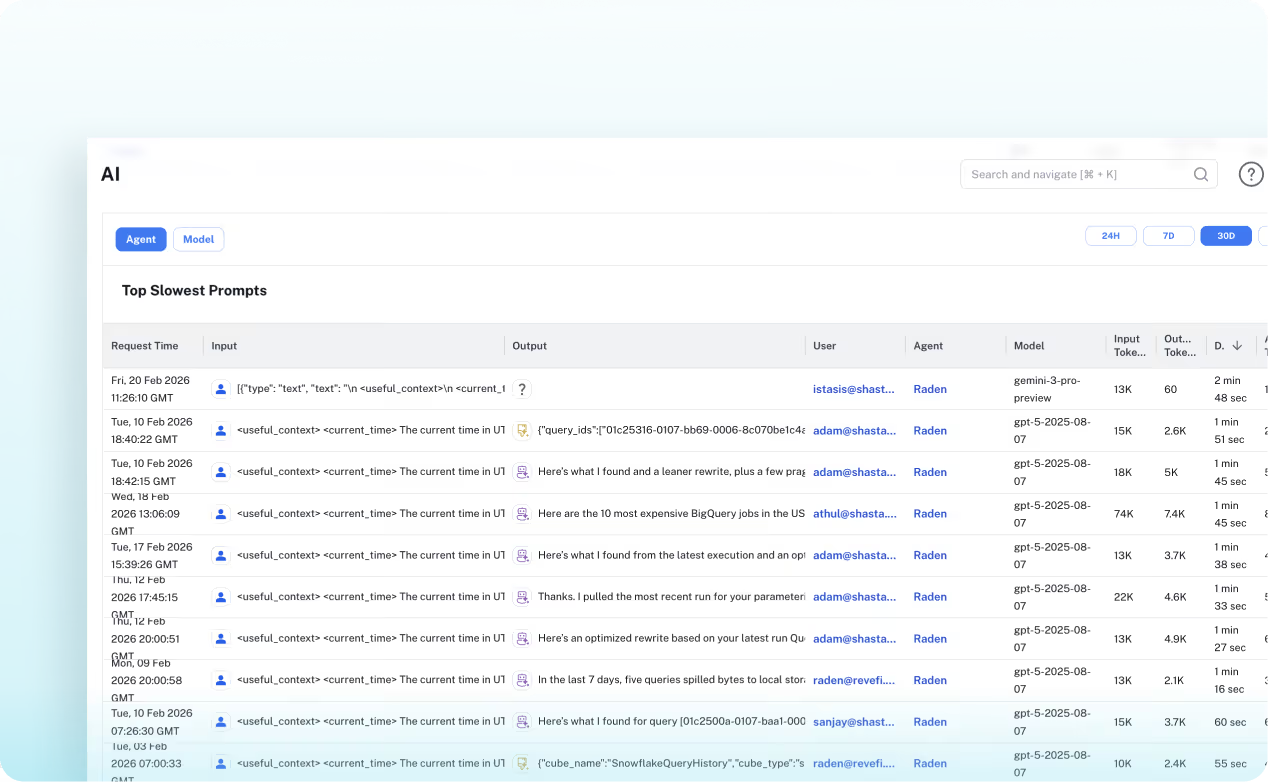

- LLM Observability: Latency benchmarking across models (GPT, Claude, Gemini), throughput metrics in tokens/sec, and failure rate tracking across providers and time windows

- Activity Logs: Searchable, filterable logs of every prompt and response — the slow query log for your AI inference layer