Optimizing Data Costs and Observability for Technology Enterprises

Revefi gives engineering and data teams continuous, actionable insights into cloud data costs, pipeline reliability, and AI workload performance.

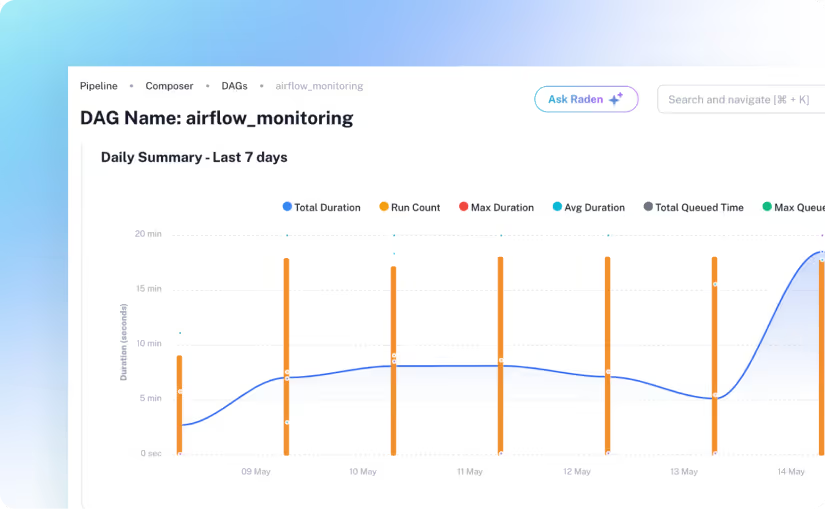

When data pipelines become slow, costly, or unreliable, the impact ripples across your entire ecosystem.

Revefi provides engineering and data teams with end-to-end visibility into cloud data costs, pipeline health, and AI workload performance. Identify inefficiencies faster, reduce unnecessary expenses, and ensure your data systems operate at peak performance.

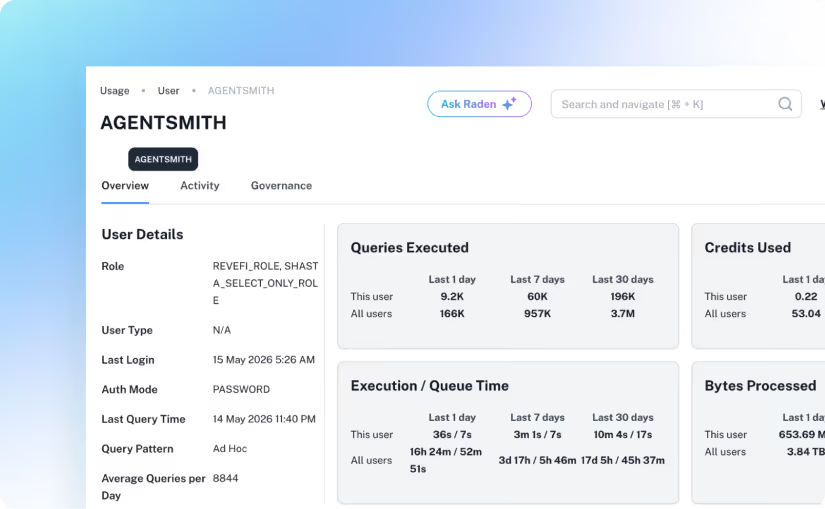

Query-level cost attribution, continuous right-sizing, idle resource detection.

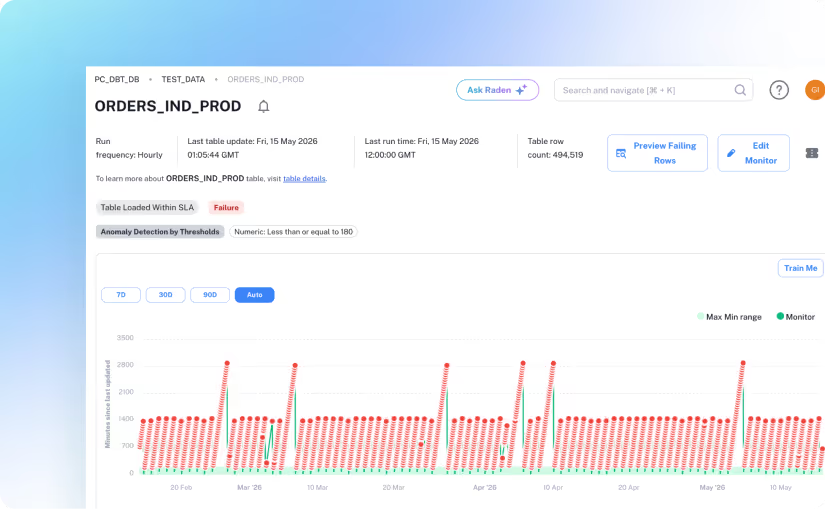

665,000+ automated monitors tracking anomalies across all connected assets.

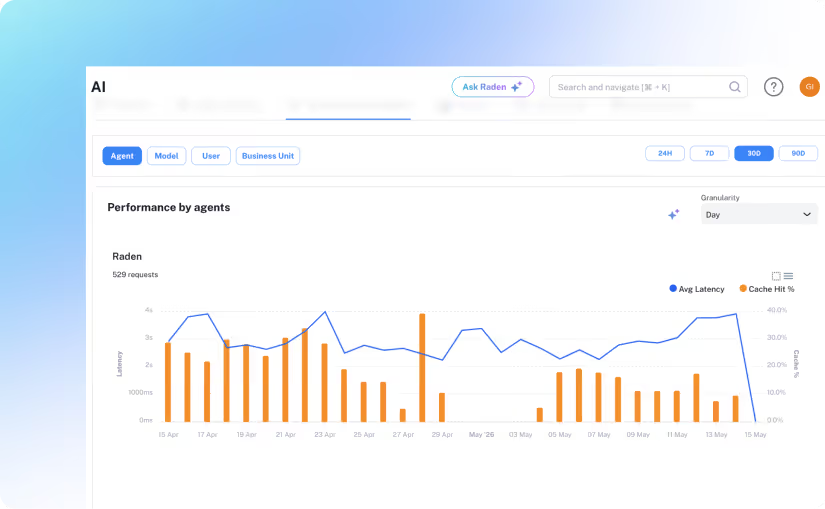

Token cost and quality monitoring from day one of a deployment.

To succeed, data teams need more than tools. They need clarity, automation, and control to tackle the hidden challenges that are slowing them down.

Turn Challenges Into Opportunities with Better Visibility and Control

Enable intelligent, autonomous optimization to continuously improve efficiency and reliability.

Works Seamlessly with Your Existing Stack

Zero-touch, read-only integration. No agents, no pipeline changes.

"With Revefi, the value of FinOps and Data Observability became clear within just a few months. They identified savings, improved quality and more than paid for themselves in no time."

Louis DiModugno

Global CDO

Fortune 1000 Company

5 Minutes

Average time to first insight.

Up to 60%

Reduction in Snowflake and cloud data costs.

100K+ Tables

Automated observability without overhead.

100%

Projected annual ROI.