Today, I joined a panel of practitioners from Coca-Cola and Snowflake for the Data Science Connect webinar, "From AI to ROI: Turning Pilots Into Scalable Impact." The conversation surfaced something I hear consistently from enterprises in our customer base: technology is rarely the bottleneck. The gap between a promising AI pilot and sustained enterprise performance is almost always systemic (and almost always fixable). Here are the frameworks, patterns, and hard lessons I shared.

The Year of the Pilot Is Over. That’s Progress.

For the last two years, enterprises have been in what I’d call an "everything, everywhere, all at once" moment with AI. Every team has been spinning up a pilot, every tool has been getting an AI wrapper, and every executive has been asking why their organization isn’t further along. The result has been a lot of activity, a proliferation of siloed proofs of concept, and (for most organizations) a conspicuous lack of production-grade impact.

That era is ending. The question enterprises are now asking isn't "should we do AI?" It's "why is our AI not delivering?" That's a far more productive question. And it has answers.

When AI fails to deliver, the cause is rarely a technology failure. It is usually an operational, organizational, or architectural failure, and those are largely preventable.

Why Pilots Stall: It's Systemic, Not Technical

When I look at the AI pilots that never made it to production, the root cause almost never lies in the model. It lies in the operating environment the model was expected to run in.

Pilots operate on curated, clean, static datasets in controlled lab conditions. Production systems inherit messy, high-velocity, constantly drifting data streams. That environmental gap is where projects go to die, not because the model was wrong, but because it was right for a world that doesn’t exist at scale.

The failure patterns I see most consistently:

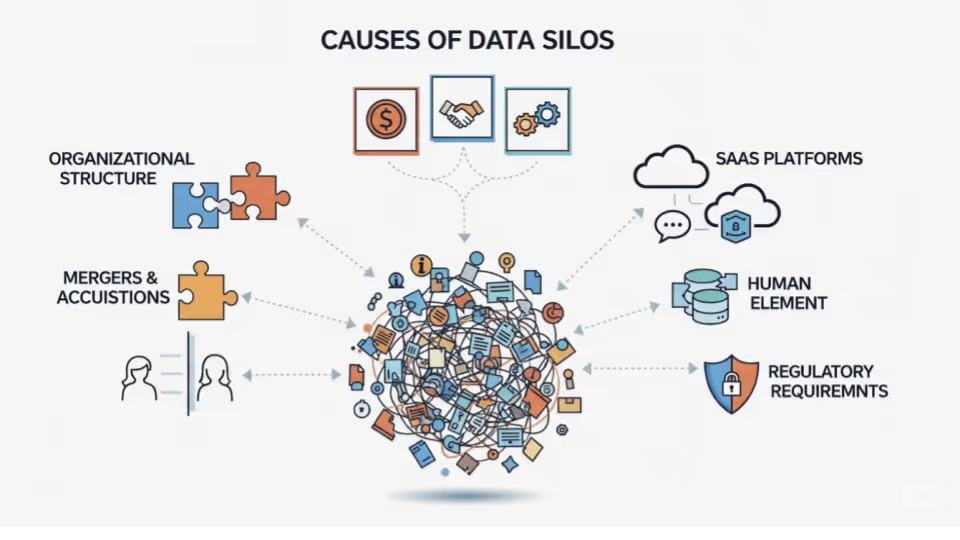

- Too many silos, too little ownership. Everyone is doing something with AI, but nobody owns the outcome. One-off projects proliferate from team to team, each chasing the latest model release, without anyone anchoring the work to a real business workflow.

- Lack of clear goals from day zero. Fear of missing out has replaced business planning as the primary driver of AI investment for many teams. Pilots that don’t start with a specific, measurable business objective don’t scale; they iterate indefinitely.

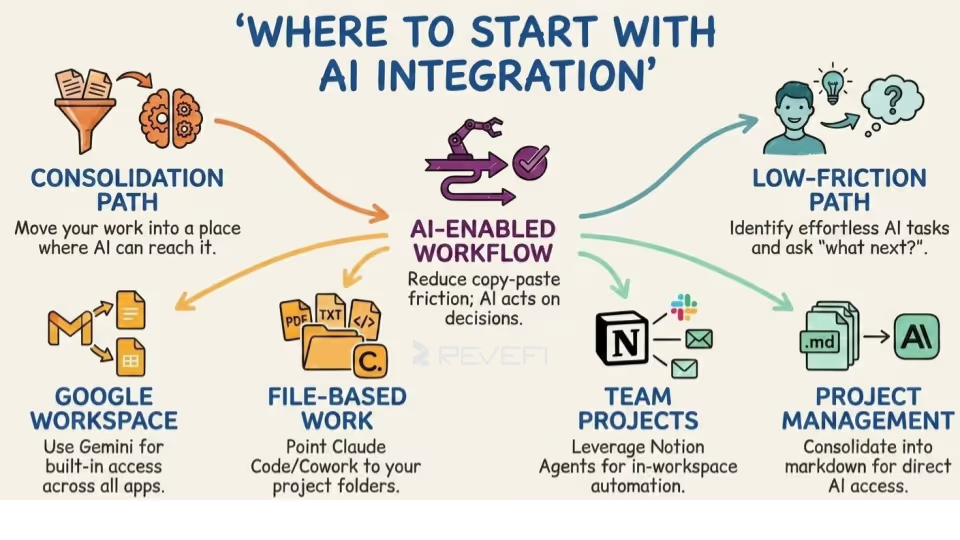

- Integration friction. AI that isn’t embedded into real workflows remains a proof of concept. The gap between a working demo and something that runs inside a live business process is enormous, and most pilots never seriously model what integration at scale looks like.

- No clear business owner. When AI remains a “data science project,” it has no constituency within the business. No one is accountable for the outcome, so when something goes wrong in production, there’s no one to fix it.

- Data readiness gap. Pilots use curated data; production relies on messy, dynamic, constantly evolving data. According to Dimensional Research, 96% of organizations encounter data quality issues when training AI models, and 85% of AI project failures trace back to data quality rather than model architecture.

- No observability from the start. Teams lack an operational mechanism to catch drift, degradation, or cost overruns once a model is deployed because monitoring is deferred to Phase 2. Phase 2 rarely arrives when there’s no production value to protect.

- Cost versus value mismatch. Scaling often increases infrastructure costs faster than it generates business value when there’s no cost attribution layer in place. Eventually, the CFO sees the bill (and the program gets cut).

"The ones having significant success started with a clear emphasis on goals, how teams are going to collaborate rather than working in silos, and picked projects that are important but aren't trying to redo their entire line of business."

A Framework for Deciding What's Worth Scaling

There are plenty of maturity models and prioritization frameworks available. Gartner has a five-stage AI Maturity Model. But the framing I find most useful in practice is simpler: three layers, each one a real question your team needs to answer before committing to scale.

The Three-Layer AI Prioritization Framework

- Business Impact. Is this a revenue driver, a cost reduction, or a productivity improvement? What is the risk profile? Be specific. "Improving efficiency" is not a business metric. A 15% reduction in claims processing time is.

- Operational Feasibility. Do you have the right data in the right state? Do you need a frontier model or a smaller, fine-tuned one? Do you have the people, tooling, and pipeline stability to operationalize this at scale?

- Sustainability. Can this use case be monitored, governed, and maintained in production with real data? What are the cost implications at 10x current volume? And critically: can the data environment that made the pilot work be replicated outside the lab?

My recommendation is to kill projects where the pilot data environment cannot be replicated in production, where success metrics are model-centric rather than business-centric, and where no one has operational accountability post-launch. Those projects will absorb resources and produce diminishing returns regardless of how well the pilot performed.
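The kill criteria above can be written down as a simple screen that a review board runs against each pilot. This is a minimal sketch, not a prescribed tool: the `UseCase` fields and the `kill_reasons` function are hypothetical names I'm introducing for illustration, and in practice each flag would come from a real assessment rather than a hand-set boolean.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    business_metric: str            # e.g. "claims processing time -15%"; empty if none
    pilot_data_replicable: bool     # can the pilot data environment exist in production?
    metrics_business_centric: bool  # success measured in business terms, not model terms
    operational_owner: str          # named owner accountable post-launch; empty if none


def kill_reasons(uc: UseCase) -> list[str]:
    """Return the reasons to cut a use case; an empty list means it passes the screen."""
    reasons = []
    if not uc.pilot_data_replicable:
        reasons.append("pilot data environment cannot be replicated in production")
    if not uc.metrics_business_centric or not uc.business_metric:
        reasons.append("success metrics are model-centric rather than business-centric")
    if not uc.operational_owner:
        reasons.append("no operational accountability post-launch")
    return reasons
```

A use case with a named owner, a specific business metric, and replicable data returns an empty list. Anything else returns the reasons to cut it, which makes the conversation with stakeholders concrete.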

The Infrastructure Layer Most Organizations Skip

Infrastructure is having a moment right now, and it deserves one. When people talk about AI infrastructure, the conversation gravitates toward models, GPUs, and vector databases. The more important conversation is about platform availability, data pipeline reliability, and observability.

Focusing only on LLM scalability is a mistake. Think about the data platform your LLM depends on. If your Snowflake, Databricks, or BigQuery environment has reliability, performance, or cost issues at scale, your AI layer will inherit every one of those problems.

Four non-negotiables for production AI infrastructure:

- Platform availability. Availability metrics for the underlying data platform matter as much as LLM uptime. For a global enterprise, AI systems cannot be a single point of failure. This needs to be tracked with the same rigor as application uptime.

- Data pipeline reliability and quality. Every data pipeline feeding an AI system needs freshness monitoring, quality validation, and drift detection running continuously. Periodic checks are not sufficient at production scale.

- MLOps infrastructure. Model deployment, versioning, and rollback must be operationalized before you attempt enterprise scale. Gartner projects 70% of enterprises will operationalize AI using MLOps. Currently, only 54% of AI models make it from pilot to production. The gap is discipline, not tooling.

- Cost visibility and token governance. Consumption-based pricing becomes an Achilles heel without clear budget allocation and spend attribution at the workflow and team level. Token maxing is a real phenomenon: teams consuming compute at rates that bear no relationship to business value generated.

Once you're running LLMs across multiple business units and multiple providers, you need a unified layer that shows cost per workflow, per model, per team. You need to know when a pipeline feeding an LLM has drifted. You need audit trails for governance. Without that layer, scale creates opacity rather than leverage.
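To make "cost per workflow, per model, per team" concrete, here is a minimal sketch of the attribution logic such a layer might run. Everything in it is an assumption for illustration: the record fields, the model names, and the prices are invented, and a real deployment would read usage from a gateway or billing export rather than an in-memory list.

```python
from collections import defaultdict

# Illustrative prices in USD per 1,000 tokens. These are made-up numbers,
# not any provider's real pricing.
PRICE_PER_1K = {
    "model-a": {"input": 0.50, "output": 1.50},
    "model-b": {"input": 0.25, "output": 0.75},
}

# Hypothetical usage records, as a gateway or logging layer might emit them.
USAGE = [
    {"team": "claims", "workflow": "triage", "model": "model-a",
     "input_tokens": 120_000, "output_tokens": 30_000},
    {"team": "claims", "workflow": "summarize", "model": "model-b",
     "input_tokens": 400_000, "output_tokens": 80_000},
    {"team": "marketing", "workflow": "copy", "model": "model-a",
     "input_tokens": 20_000, "output_tokens": 40_000},
]


def record_cost(rec: dict) -> float:
    """Cost of one usage record in USD."""
    price = PRICE_PER_1K[rec["model"]]
    return (rec["input_tokens"] / 1000 * price["input"]
            + rec["output_tokens"] / 1000 * price["output"])


def cost_by(records: list[dict], *keys: str) -> dict[tuple, float]:
    """Total spend grouped by any combination of team, workflow, and model."""
    totals: dict[tuple, float] = defaultdict(float)
    for rec in records:
        totals[tuple(rec[k] for k in keys)] += record_cost(rec)
    return dict(totals)
```

Calling `cost_by(USAGE, "team")` rolls spend up per team, while `cost_by(USAGE, "team", "workflow")` breaks it down further. The point of the design is that the grouping keys are tags attached at request time. If the tags are missing, no amount of downstream analysis can recover the attribution.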

Getting Teams to Move in Sync

Scaling AI fails when it's treated as a technology deployment. It succeeds when it is treated as an organizational change with technology as the enabler.

The 70/20/10 rule has become one of my most useful reference points here. BCG research finds that scaling AI successfully requires 70% of effort on people and processes, 20% on technology and data, and 10% on the model. In practice, most enterprises have inverted this ratio: investment has concentrated on models and tooling, with the expectation that people and process alignment will follow naturally. It doesn't.

What actually works:

- A shared definition of what good looks like, authored by business stakeholders rather than handed down from a data science team. Because models are changing every three months, agreeing on the direction rather than a fixed destination is often the better framing for now.

- AI engineers embedded in functional teams, not sitting in a central silo fielding tickets. The marketing and sales teams seeing genuine AI success have an AI architect embedded directly within the functional group.

- Single ownership per use case. Committee-driven AI fails at a much higher rate than use cases with a named owner. Too many decision-makers means no one is accountable when something goes wrong in production.

- Shared metrics across teams, not siloed KPIs. A Performance Observability Dashboard that both the business lead and the data engineer can see puts everyone on the same signal. When teams are measuring different things, alignment becomes a negotiation rather than a shared reality.

- Short feedback loops. Operations and engineering should be aligned on a weekly cadence, not quarterly reviews. The pace of model change demands it.

"Too many master chefs" is one of the leading causes of failure in organizations that have the talent but can't move. The remedy is a common definition of success, embedded teams, and shared metrics.

Governance That Doesn't Kill Momentum

Governance is the most misunderstood element of AI scaling. Weak governance destroys trust and creates compliance exposure. Heavy governance destroys velocity. The answer isn't a balance between the two. It's a different architecture entirely.

Four years ago, I made the case for the convergence of ML, data, and security governance into a single operational model. That thesis has held so far. What enterprises need now isn't more governance layers on top of existing data governance structures. They need a fundamental shift from approval-based to visibility-based governance.

Three principles that work in practice:

- Metadata-first governance. You don't need access to the data itself to govern it. You need lineage, access logs, quality signals, and cost attribution. This approach is structurally less invasive and far faster to implement than traditional data access governance.

- Automated guardrails over manual approvals. Define the rules once, enforce them continuously, and alert on exceptions. Human review belongs at edge cases, not in the critical path of every deployment.

- Tiered oversight. A demand forecasting model and a customer-facing AI agent do not require the same governance rigor. Treating every AI system identically creates bottlenecks at the wrong places.
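The three principles combine naturally into a small policy check. It reads only metadata, applies rules automatically, and varies the rules by tier. The sketch below assumes two tiers and a handful of check names that I made up for illustration. Your own tiers and required signals will differ.

```python
from enum import Enum


class Tier(Enum):
    INTERNAL = 1         # e.g. a demand forecasting model
    CUSTOMER_FACING = 2  # e.g. a customer-facing AI agent


# Hypothetical metadata checks required per tier. Note that none of them
# needs access to the underlying data, only to signals about it.
REQUIRED_CHECKS = {
    Tier.INTERNAL: {"lineage_recorded", "cost_tagged"},
    Tier.CUSTOMER_FACING: {
        "lineage_recorded", "cost_tagged", "access_logged", "quality_threshold_met",
    },
}


def evaluate(tier: Tier, metadata: dict[str, bool]) -> tuple[str, list[str]]:
    """Auto-approve when every required check passes; otherwise escalate the exceptions."""
    missing = sorted(c for c in REQUIRED_CHECKS[tier] if not metadata.get(c, False))
    if not missing:
        return "auto-approve", []
    return "escalate", missing
```

With the same metadata, an internal model can pass automatically while a customer-facing one is escalated for human review, listing exactly which signals are missing. That keeps humans at the edge cases rather than in the critical path.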

Measuring Real ROI: Move Past the Accuracy Trap

Model accuracy is a development metric. It is not a business metric. I've seen 94%-accurate models destroy business value because accuracy was measured on a test set that didn't reflect production distribution. The metrics that actually matter at the enterprise level:

- Business outcomes measured directly: revenue impact, cost reduction, cycle time improvement, error rate reduction. Direct lines, not proxies.

- Time-to-insight. How fast does the AI system surface actionable information versus the baseline? What is the actual time reduction for the teams using it?

- Cost efficiency. Total spend on AI infrastructure per unit of business value generated. This is where most organizations are genuinely blind, especially in multi-provider LLM environments.

- Data reliability index. The percentage of time the data feeding the AI is meeting quality thresholds. A model is only as good as its inputs, and most teams aren't measuring this at all.
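The data reliability index is the easiest of these to start measuring. Here is a minimal sketch, assuming you already evaluate each pipeline once per time window. The `WindowStats` fields and both thresholds are hypothetical placeholders, not recommended values.

```python
from dataclasses import dataclass

# Hypothetical thresholds; set these per pipeline based on what the model needs.
MAX_STALENESS_MINUTES = 60.0
MAX_NULL_RATE = 0.02


@dataclass
class WindowStats:
    minutes_since_last_load: float  # freshness of the data in this window
    null_rate: float                # fraction of nulls in required columns


def meets_thresholds(w: WindowStats) -> bool:
    """True when the window is both fresh enough and complete enough."""
    return (w.minutes_since_last_load <= MAX_STALENESS_MINUTES
            and w.null_rate <= MAX_NULL_RATE)


def data_reliability_index(windows: list[WindowStats]) -> float:
    """Percentage of observed windows in which the data met its quality thresholds."""
    if not windows:
        raise ValueError("no windows observed")
    passed = sum(meets_thresholds(w) for w in windows)
    return 100.0 * passed / len(windows)
```

Reported next to model metrics on a shared dashboard, a number like this lets the business lead and the data engineer see the same signal when output quality dips.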

For organizations running LLMs at scale, the most underappreciated ROI conversation is the cost of unobserved LLM deployments. Without financial attribution at the workflow and team level, LLM costs scale faster than business value. In this context, observability is a direct cost control mechanism, not just a reliability tool.

How Roles Are Shifting

When AI becomes a core operating capability, it doesn't just add new boxes to the org chart. It redistributes accountability. I see this playing out in two stages.

In the near term, we're seeing role expansion and creation of specialized roles. Data engineers and architects are taking on AI infrastructure ownership, responsible not just for pipelines but for the data contracts that AI systems depend on. Product managers are gaining AI literacy requirements. Business operations leads are becoming primary consumers of AI observability because they need to know when AI is underperforming before it affects customers. And the CDAO role is expanding rapidly.

In the medium term, we're heading toward complete organizational redesign. Large companies are exploring flattening team structures and equipping smaller, AI-empowered groups to do what previously required much larger teams. That transformation will take a few more years and depends on the next generation of AI infrastructure to support it.

The cultures that treat a failing model as a management problem rather than a learning signal will perpetually lag in AI maturity. Pilots fail. Models drift. That is not a crisis. It is information. The organizations building durable AI advantage have normalized this and invested in the infrastructure to detect failures fast, understand why, and iterate.

Sustaining AI Value: Observability Designed In, Not Bolted On

The most dangerous moment in AI deployment isn't launch day. It happens 90 days later, when the model has been stable long enough for teams to stop watching it, but early enough that data drift hasn't fully manifested. The organizations that sustain value have continuous observability running before they stop paying active attention.

The practices that consistently work:

- Drift detection as a default. Every model in production should have automated alerts for input data distribution shift, not just output accuracy degradation.

- Cost trend monitoring. AI infrastructure costs compound quickly. Automated cost alerting is the operational standard for teams running at scale, not a nice-to-have.

- Structured feedback loops. A lightweight, regular process for business stakeholders to surface model behavior concerns to technical teams. Weekly async cadence, not quarterly reviews.

- Version and rollback discipline. The ability to compare current model performance against a baseline and roll back within hours, not weeks.

- Operations rigor with AI observability. Tracking value over time, not just at launch. Value tracking is an ongoing practice, not a post-pilot report.
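For input drift specifically, one common and simple choice is the Population Stability Index (PSI), which compares the binned distribution of a feature in production against a baseline. The sketch below is my illustration rather than a prescribed method. The bin count, the floor for empty bins, and the 0.2 alert threshold are assumptions (0.2 is a widely used rule of thumb, not a standard).

```python
import math

PSI_ALERT_THRESHOLD = 0.2  # rule-of-thumb value; tune per feature


def psi(baseline: list[float], current: list[float], bins: int = 10) -> float:
    """Population Stability Index of `current` against `baseline` for one numeric feature."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


def has_drifted(baseline: list[float], current: list[float]) -> bool:
    """True when the input distribution has shifted enough to warrant an alert."""
    return psi(baseline, current) > PSI_ALERT_THRESHOLD
```

Run on a schedule for each important input feature, a check like this raises an alert when the data moves, even while output accuracy still looks fine.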

Key Takeaways

Don't spend quarters or years debating AI strategy. The enterprises winning on AI in 2026 are the ones that identified targeted use cases tied to real business outcomes, made their data AI-ready before building, and designed observability and governance from day one rather than treating them as future-state items.

The three-layer framework is simple, but it requires discipline. Is the business impact clear? Is the build operationally feasible? Is the use case sustainable in production with real data, real governance, and real cost accountability?

If the answer to all three is yes, scale it. If not, and especially if the pilot data can't survive contact with production reality, cut it and redirect resources toward something that can.

"Start with targeted use cases with clear outcomes. Embed cost optimization, observability, and governance capabilities from day zero. Design it in. Don't bolt it on."

You can watch the entire webinar on demand here: From AI to ROI: Turning Pilots Into Scalable Impact | Webinar Replay