Yesterday, Snowflake announced the research preview of Project SnowWork, which it describes as an “autonomous enterprise AI platform” intended to help business users execute complex, multi-step workflows without relying on data teams. The use cases are straightforward: a CFO asks for a revenue review and gets a slide deck with variance analysis. Sales Ops requests a pipeline risk brief and gets a rollup with prioritized risks. FP&A asks for a close narrative and gets a draft grounded in KPIs. Marketing asks for budget reallocation recommendations and gets a stakeholder memo with supporting metrics.

The underlying premise is that AI agents can eliminate the gap between business intent and finished, data-driven output, compressing work that takes days into minutes.

That's a reasonable ambition. But it rests entirely on two assumptions that Project SnowWork itself cannot validate: that the data the agents act on is correct, and that the resulting work delivers ROI.

What Project SnowWork Is and Isn't

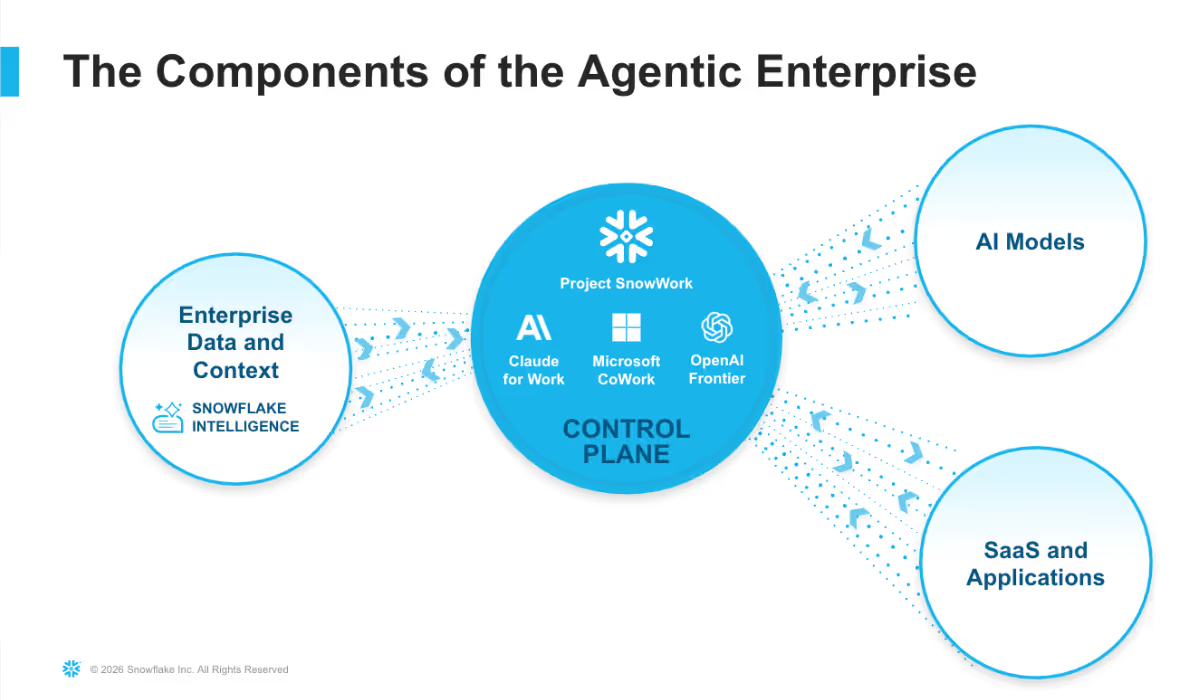

Snowflake's marketecture for the Agentic Enterprise includes four pillars:

- Enterprise Data & Context, the governed foundation that grounds AI decisions in a shared understanding of the business

- AI Models, reasoning engines that generate analysis, predictions, and recommendations

- SaaS & Applications, enterprise systems where operational actions are executed

- The Control Plane, the coordination and governance layer that translates intelligence into authorized action

Snowflake positions Enterprise Data & Context as the foundation everything else depends on. They're right. But Project SnowWork is an execution layer that assumes the data foundation is healthy. It has no mechanism to verify that assumption continuously. That gap is real, and it matters.

The Gap: What Happens When the Data Is Wrong

When you consider each of Snowflake's own use cases under realistic data conditions, the picture looks different:

- Sales Ops asks for a pipeline risk brief with root-cause diagnostics, but the CRM sync that feeds Snowflake has been silently broken for two days and deal stage data is stale.

- FP&A asks for a variance explanation grounded in governed KPIs, but three weeks ago the revenue metric was redefined in one table and not updated in another.

- Marketing asks for CAC and pipeline influence data across the last four weeks, but a tagging change in the ad platform caused three days of attribution data to drop.

- A CFO gets an automated close narrative for quarterly reports, but an upstream pipeline failure means actuals still reflect last month's numbers.

In each case, Project SnowWork may execute confidently. The agent has no way to know the data is wrong. The control plane enforces access policies and governance rules, but it doesn't validate data freshness, consistency, or accuracy. The output looks authoritative (a polished slide deck, an executive brief, a stakeholder memo), and the error propagates directly into a high-stakes business decision.

This is the central risk of autonomous execution at scale: agents amplify data quality failures rather than catching them. In a manual workflow, a bad number surfaces in a meeting. In an agentic workflow, it ends up in a board presentation.

Where Revefi Fits

Revefi addresses the gaps that Project SnowWork's architecture leaves open.

Cost and Performance Optimization

Project SnowWork makes it easy for users to trigger complex Snowflake workflows on demand. The side effect is that compute consumption becomes harder to predict and attribute. When every analyst, Sales Ops manager, and marketing leader can autonomously initiate multi-step analytical workflows, unoptimized queries, redundant workloads, and oversized virtual warehouses accumulate without a clear owner.

Revefi provides the controls organizations need to manage this:

- Warehouse rightsizing that ensures agentic workloads run on appropriately sized infrastructure.

- Workload-level cost attribution to understand which agentic workflows and business functions are driving Snowflake consumption, so costs can be governed proactively rather than discovered retroactively.

- Query optimization to identify inefficient query patterns generated by agents before they become chronic cost drains.

- Spend anomaly detection to alert teams when costs spike unexpectedly, enabling fast remediation.

The economics of autonomous execution only work if consumption is visible and governed. Without this layer, Project SnowWork's scale ambitions and the CFO's Snowflake bill are on a collision course.
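To make the spend anomaly idea concrete, here is a minimal sketch of one common approach: comparing each day's credit consumption to a trailing window using a robust z-score (median and median absolute deviation). This is an illustration only, not Revefi's implementation; the function name `spend_anomalies`, the window size, the threshold, and the sample numbers are all assumptions.

```python
from statistics import median


def spend_anomalies(daily_credits, window=7, threshold=3.5):
    """Return indices of days whose spend deviates sharply from the trailing window.

    Hypothetical helper: uses a robust z-score built from the median and the
    median absolute deviation (MAD) of the previous `window` days.
    """
    flagged = []
    for i in range(window, len(daily_credits)):
        history = daily_credits[i - window:i]
        med = median(history)
        mad = median(abs(x - med) for x in history)
        if mad == 0:
            # Perfectly flat history: treat any change at all as worth a look.
            if daily_credits[i] != med:
                flagged.append(i)
            continue
        # 0.6745 scales MAD so the score is comparable to a standard z-score.
        score = 0.6745 * (daily_credits[i] - med) / mad
        if abs(score) > threshold:
            flagged.append(i)
    return flagged


# Made-up daily credit totals; day 8 is the kind of spike an agentic
# workload burst might cause.
credits = [100, 102, 98, 101, 99, 103, 100, 101, 240, 102]
spike_days = spend_anomalies(credits)
```

A median-based score is used here rather than a mean and standard deviation so that a single earlier spike does not inflate the baseline and mask the next one.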

Continuous Data Quality and Observability

Revefi monitors pipelines, tables, and metrics in real time, detecting anomalies, schema changes, freshness violations, and distribution shifts before they reach downstream systems. When Project SnowWork queries a Snowflake dataset to generate a CFO revenue review, Revefi has already validated whether that data is fit for the purpose. Without this layer, "governed data" is only as trustworthy as the last manual audit, which in most organizations is neither continuous nor comprehensive.
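Two of the checks named above, freshness violations and schema changes, can be sketched in a few lines. The example below is a simplified illustration under stated assumptions, not Revefi's API: `TableSnapshot`, `check_freshness`, and `check_schema` are hypothetical names, and the snapshot is assumed to come from whatever metadata source you already have.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class TableSnapshot:
    """Hypothetical view of a table's metadata at check time."""
    name: str
    last_loaded_at: datetime
    columns: dict  # column name -> declared type


def check_freshness(snapshot, max_age, now=None):
    """Return a message if the table is older than max_age, else None."""
    now = now or datetime.now(timezone.utc)
    age = now - snapshot.last_loaded_at
    if age > max_age:
        return f"{snapshot.name}: last load is {age} old (limit {max_age})"
    return None


def check_schema(snapshot, expected_columns):
    """Return a list of differences between actual and expected columns."""
    issues = []
    for col, typ in expected_columns.items():
        if col not in snapshot.columns:
            issues.append(f"{snapshot.name}: missing column {col}")
        elif snapshot.columns[col] != typ:
            issues.append(
                f"{snapshot.name}: {col} is {snapshot.columns[col]}, expected {typ}"
            )
    for col in sorted(snapshot.columns.keys() - expected_columns.keys()):
        issues.append(f"{snapshot.name}: unexpected column {col}")
    return issues


# Illustrative values only: a CRM table last loaded two days before the check.
now = datetime(2025, 1, 10, tzinfo=timezone.utc)
deals = TableSnapshot(
    name="crm_deals",
    last_loaded_at=now - timedelta(days=2),
    columns={"deal_id": "NUMBER", "stage": "VARCHAR", "amount": "VARCHAR"},
)
stale = check_freshness(deals, max_age=timedelta(hours=6), now=now)
drift = check_schema(deals, {"deal_id": "NUMBER", "stage": "VARCHAR", "amount": "NUMBER"})
```

Here `stale` carries a message because the load is two days old, and `drift` reports that `amount` changed type. Distribution-shift checks follow the same pattern but compare column statistics against a historical baseline.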

End-to-End Data Lineage

When an autonomous agent produces a budget reallocation memo or a close narrative, someone will ask: where did this come from, and can we verify it? Project SnowWork enforces access controls and audit logging at the query layer. That's necessary but not sufficient. Revefi provides full lineage from source to consumption, tracing every data asset that contributed to an agentic output. This is what makes "enterprise trust by design" a verifiable claim rather than a product positioning statement.
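Conceptually, answering "where did this come from?" is a graph traversal: start at the output and walk every upstream dependency. The sketch below shows that idea on a toy lineage map. The asset names and the `upstream_assets` helper are made up for illustration, and real lineage is typically derived from query history rather than written by hand.

```python
def upstream_assets(lineage, asset):
    """Return every asset that directly or indirectly feeds `asset`.

    `lineage` maps each asset to the assets it reads from directly.
    Hypothetical helper; handles shared parents and cycles via `seen`.
    """
    seen = set()
    stack = [asset]
    while stack:
        current = stack.pop()
        for parent in lineage.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


# Toy lineage for an agent-generated close narrative (illustrative names).
lineage = {
    "close_narrative": ["revenue_kpis"],
    "revenue_kpis": ["orders", "fx_rates"],
    "orders": ["crm_sync"],
}
sources = upstream_assets(lineage, "close_narrative")
```

With a set like `sources` in hand, an incident on `crm_sync` can be tied back to every agentic output that depends on it.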

Proactive Incident Detection

The control plane determines whether an action should occur and under what constraints. It has no visibility into whether the data supporting that action is healthy. Revefi surfaces data incidents (broken pipelines, unexpected nulls, metric divergence, freshness violations) with business impact context before an agent acts on them. For time-sensitive workflows like weekly close narratives or real-time pipeline triage, catching a data failure before agent execution is the difference between a credible output and a costly one.
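One way to picture "catching a data failure before agent execution" is a gate that refuses to run a task while any table it depends on has an open incident. The following is a minimal sketch of that pattern, assuming incidents are available as a simple mapping; `DataNotReadyError`, `run_if_healthy`, and the incident format are hypothetical and do not describe how Project SnowWork or Revefi actually integrate.

```python
class DataNotReadyError(RuntimeError):
    """Raised when a task depends on tables with open data incidents."""


def run_if_healthy(task, required_tables, open_incidents):
    """Run `task` only if none of its required tables has an open incident.

    `open_incidents` maps table name -> short incident description
    (an assumed format for this sketch).
    """
    blocking = {
        table: open_incidents[table]
        for table in required_tables
        if table in open_incidents
    }
    if blocking:
        details = "; ".join(f"{t}: {msg}" for t, msg in sorted(blocking.items()))
        raise DataNotReadyError(f"blocked by open incidents ({details})")
    return task()


def build_pipeline_brief():
    # Stand-in for the agent workflow itself.
    return "pipeline risk brief"


incidents = {"crm_deals": "sync stale for 2 days"}
try:
    run_if_healthy(build_pipeline_brief, ["crm_deals", "accounts"], incidents)
    outcome = "ran"
except DataNotReadyError as exc:
    outcome = str(exc)
```

Failing loudly is a deliberate choice in this sketch: a blocked brief with a stated reason is easier to act on than a polished brief built on stale deal stages.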

Monitoring Agent Behavior

This is where Revefi's role extends beyond the data layer into something more fundamental for the agentic era. Data observability asks: is the data correct? AI observability asks: is the agent behaving correctly? Both questions need answers.

As Project SnowWork agents execute multi-step workflows autonomously, a new category of operational questions emerges that traditional monitoring doesn't address:

- Are agents completing tasks within expected latency and quality thresholds?

- Are outputs drifting over time as underlying data distributions shift?

- When an agent produces an unexpected result, which step in the workflow caused it?

- Are persona profiles performing consistently across functions, or are some systematically underperforming?

- How does agent behavior change when the data it queries degrades in freshness or completeness?

Revefi's AI observability capabilities provide instrumentation at the agent level, monitoring prompts, intermediate steps, outputs, and the data context each agent operated in. This allows organizations to detect, diagnose, and resolve agentic failures, not just data failures. It closes the final accountability gap in autonomous execution: you can verify the data going in, and you can verify the agent behavior coming out.
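As a rough illustration of what agent-level instrumentation captures, the sketch below records each workflow step with its inputs, output, the tables it touched, and how long it took. It is a generic tracing pattern, not Revefi's product surface: `AgentTrace`, `StepRecord`, and the example steps are hypothetical.

```python
import time
from dataclasses import dataclass, field


@dataclass
class StepRecord:
    name: str
    inputs: dict
    output: object
    tables: list
    seconds: float


@dataclass
class AgentTrace:
    """Hypothetical per-run trace: the prompt plus one record per step."""
    prompt: str
    steps: list = field(default_factory=list)

    def record(self, name, fn, inputs, tables=()):
        """Run one step, capture its inputs, output, data context, and latency."""
        start = time.perf_counter()
        output = fn(**inputs)
        elapsed = time.perf_counter() - start
        self.steps.append(StepRecord(name, inputs, output, list(tables), elapsed))
        return output

    def tables_touched(self):
        """All tables any step read, for joining against data incidents."""
        return sorted({t for step in self.steps for t in step.tables})

    def slowest_step(self):
        return max(self.steps, key=lambda s: s.seconds) if self.steps else None


# Illustrative two-step workflow with stand-in functions.
trace = AgentTrace(prompt="Summarize pipeline risk for Q3")
total = trace.record(
    "sum_open_pipeline",
    lambda amounts: sum(amounts),
    {"amounts": [120, 80, 50]},
    tables=["crm_deals"],
)
summary = trace.record(
    "draft_summary",
    lambda total: f"Open pipeline: {total}",
    {"total": total},
    tables=["crm_deals", "accounts"],
)
```

Because each step records the tables it read, an unexpected output can be traced to a specific step and cross-checked against data incidents on those tables.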

The Stakes Are Higher Than They Appear

The use cases Snowflake has identified are high-stakes: executive revenue reviews, narratives for leadership, campaign budget decisions, pipeline briefs for sales leadership. These outputs drive resource allocation, investor communications, and organizational strategy. They're not reports that sit in a static dashboard; they're inputs to decisions with real consequences.

That's precisely the problem. The more consequential the output, the more costly a silent data or agent failure becomes. A pipeline brief built on stale data doesn't just waste time; it sends sales leadership in the wrong direction. A close narrative with an inconsistent revenue metric doesn't just look bad; it undermines CFO credibility at the board level.

Project SnowWork's value proposition is speed and autonomy. Revefi's value proposition is ensuring that speed and autonomy don't come at the expense of cost control, accuracy, and accountability.

The Bottom Line

Project SnowWork is a reasonable attempt to bring autonomous AI execution to business users on top of Snowflake's data platform, and Snowflake deserves credit for it. The use cases are real. The gaps, however, are also real, and they sit precisely in the layers that determine whether agentic outputs can actually be trusted and sustained at enterprise scale.

Revefi addresses those gaps directly: continuous data quality monitoring, end-to-end lineage, proactive incident detection, cost and performance optimization, and AI observability. Together, these capabilities turn a promising research preview into something an enterprise can actually rely on.

Project SnowWork will likely move work faster. Revefi makes sure that work is right.

Want to see how Revefi integrates with your Snowflake environment to support your AI readiness? Contact us.
