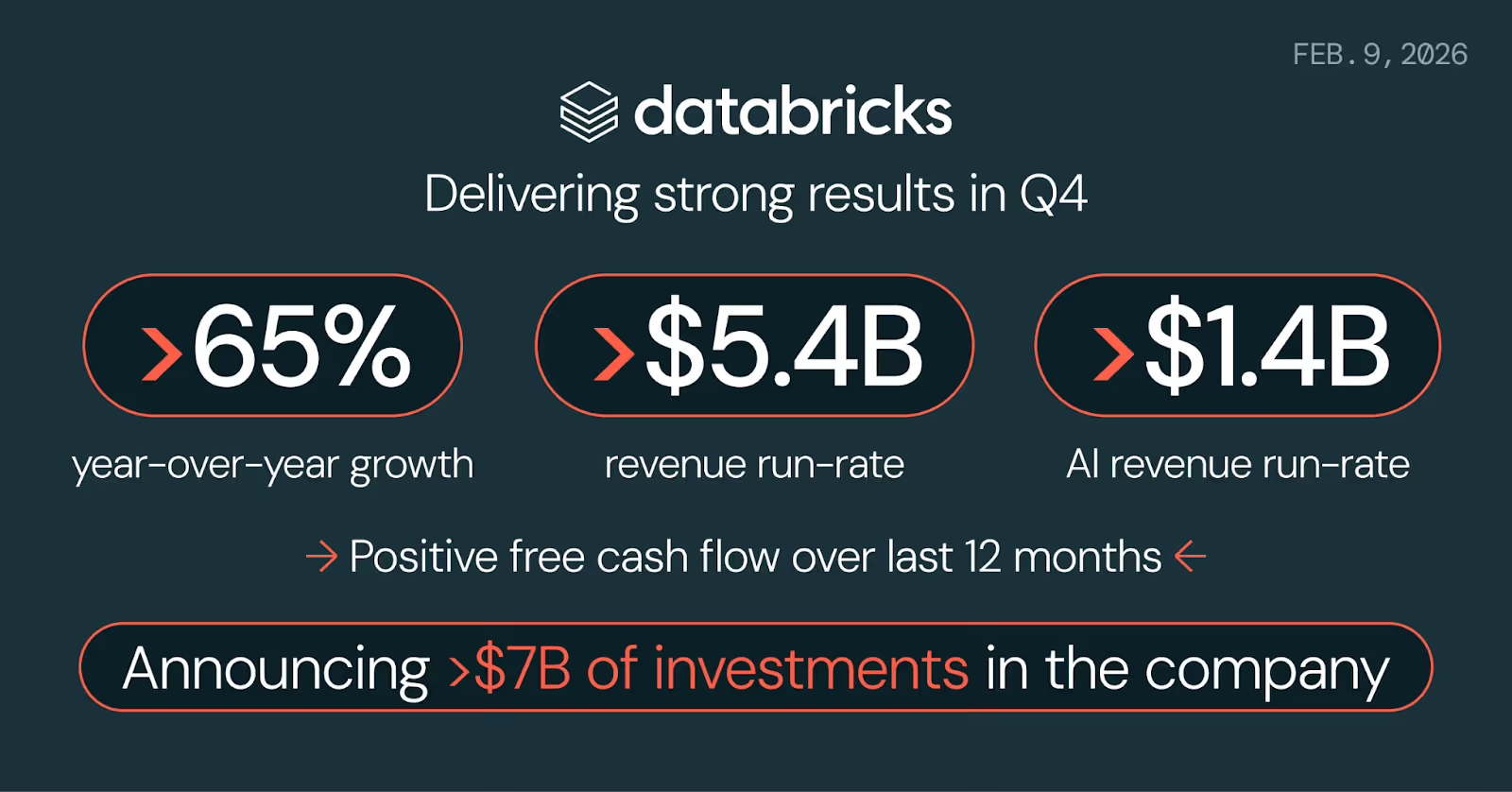

Databricks reported another strong quarter, surpassing a $5.4 billion annual revenue run rate in Q4, up more than 65% year over year. Just months earlier, it crossed $4.8 billion, signaling sustained enterprise adoption rather than a one-time spike. The continued growth highlights how deeply organizations are embedding Databricks into their data engineering, analytics, and AI strategies.

The company is also closing over $7 billion in new funding, including approximately $5 billion in equity at a $134 billion valuation and about $2 billion in debt capacity. Investors include JPMorganChase, Microsoft, Goldman Sachs, Morgan Stanley, and Qatar Investment Authority. This momentum underscores growing enterprise demand for unified data and AI platforms at scale.

The Numbers Behind the Headline

Beyond headline revenue growth, Databricks reported several key operating metrics that highlight accelerating enterprise adoption of its data and AI platform.

- AI revenue run rate exceeds $1.4 billion, signaling that enterprise AI initiatives are moving from pilot projects into full-scale production environments.

- Net revenue retention is above 140%, showing that existing customers consistently expand their usage year over year.

- 800+ customers spend more than $1 million annually, with 70+ exceeding $10 million in annual spend, reflecting strong enterprise-scale deployments.

- The company generated positive free cash flow over the past 12 months, reinforcing financial strength and operational efficiency.
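For readers less familiar with the retention metric above, here is a minimal sketch of how net revenue retention is commonly calculated. The cohort figures are hypothetical and chosen only to land on 140%; they are not Databricks data, and the function name is our own.

```python
def net_revenue_retention(start_arr: float, expansion: float,
                          contraction: float, churn: float) -> float:
    """NRR = (starting ARR + expansion - contraction - churn) / starting ARR.

    All inputs refer to the same customer cohort over the same period.
    """
    if start_arr <= 0:
        raise ValueError("start_arr must be positive")
    return (start_arr + expansion - contraction - churn) / start_arr


# Hypothetical cohort, in $ millions (illustrative only, not Databricks figures).
nrr = net_revenue_retention(start_arr=100.0, expansion=50.0,
                            contraction=4.0, churn=6.0)
print(f"{nrr:.0%}")  # prints 140%
```

An NRR above 100% means expansion from existing customers outweighs downgrades and churn, before any new customers are counted.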

Today, more than 20,000 organizations rely on Databricks for mission-critical data and AI workloads, including Adidas, AT&T, Bayer, Mastercard, Rivian, and Unilever, as well as over 60% of the Fortune 500.

What the New Funding Is For

Databricks is directing its strategic investments toward two areas designed to expand enterprise AI adoption.

Lakebase:

A serverless PostgreSQL database built specifically for AI agents and engineered to support operational workloads directly within the Databricks platform. As enterprises deploy agentic AI applications at scale, they need integrated, production-ready databases that run natively inside their data ecosystem. Lakebase addresses this need by combining transactional capabilities with the lakehouse architecture.

Genie:

A conversational AI assistant that enables business users to query enterprise data using natural language, eliminating the need for SQL expertise or analyst support. This democratizes access to insights and extends the value of the lakehouse beyond data and engineering teams.

Together, these investments reflect Databricks’ vision of enterprise AI: intelligent systems embedded across the organization, empowering technical and non-technical users alike.

Growth Brings Its Own Problems

As Databricks adoption accelerates, operational complexity increases across cost management, performance optimization, and data quality governance.

Rising Databricks Costs in Consumption-Based Pricing

Databricks’ consumption-based pricing model offers flexibility but makes cloud spend harder to predict. As data pipelines expand and more users adopt tools like Genie, compute usage can rise quickly without clear attribution to specific teams or workloads. Natural-language queries from non-technical users may trigger significant compute resources without visibility into cost impact. Meanwhile, Mosaic AI workloads (model training, fine-tuning, and inference) introduce variable, often spiky expenses that are difficult to forecast.
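The attribution gap described above can be illustrated with a small sketch. It assumes usage has already been exported as records with a team tag and a Databricks Unit (DBU) count; the record layout, field names, and values are hypothetical and do not represent a Databricks schema.

```python
from collections import defaultdict

# Hypothetical usage records; field names and values are illustrative only.
usage = [
    {"workload": "etl_nightly", "team": "data-eng", "dbus": 420.0},
    {"workload": "genie_queries", "team": "finance", "dbus": 95.5},
    {"workload": "genie_queries", "team": None, "dbus": 61.0},  # no owner tag
    {"workload": "model_finetune", "team": "ml", "dbus": 310.0},
]


def dbus_by_team(records):
    """Sum DBUs per team, grouping untagged usage under 'unattributed'."""
    totals = defaultdict(float)
    for record in records:
        totals[record["team"] or "unattributed"] += record["dbus"]
    return dict(totals)


totals = dbus_by_team(usage)
unattributed_share = totals.get("unattributed", 0.0) / sum(totals.values())

for team, dbus in sorted(totals.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{team:>14}: {dbus:8.1f} DBUs")
print(f"unattributed share: {unattributed_share:.1%}")
```

Tracking the unattributed share over time is one simple way to see whether tagging discipline is keeping up with adoption.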

Performance Degradation at Scale

Over time, pipelines may miss SLAs due to data growth, schema changes, or outdated cluster configurations. AI model training runtimes and inference latency add further complexity, often without centralized monitoring.
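One simple way to catch this kind of drift is to compare each run against a baseline built from recent runs. The sketch below is illustrative only: the function name, the 1.5x tolerance, and the sample durations are assumptions, not a recommended threshold or any vendor's method.

```python
from statistics import median


def is_degraded(history_minutes, latest_minutes, tolerance=1.5):
    """Return True when the latest run exceeds tolerance x the median of prior runs.

    With no history there is no baseline, so nothing is flagged.
    """
    if not history_minutes:
        return False
    return latest_minutes > tolerance * median(history_minutes)


# Hypothetical durations (minutes) for the last five runs of one pipeline.
history = [30, 32, 31, 29, 33]

print(is_degraded(history, latest_minutes=50))  # above 1.5 x the median of 31
print(is_degraded(history, latest_minutes=40))  # within tolerance
```

A median baseline is used here because a single unusually slow run in the history shifts it far less than it would shift a mean.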

Data Quality Risks in AI Workloads

Schema drift, late-arriving data, and silent pipeline failures can propagate inaccurate insights. For conversational AI and machine learning models, poor data quality directly impacts trust, reliability, and business outcomes.
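Two of these risks, schema drift and stale data, lend themselves to simple automated checks. The following is a minimal sketch under stated assumptions: schemas are represented as plain column-to-type dictionaries, and the table, column names, and freshness window are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def schema_drift(expected: dict, observed: dict) -> dict:
    """Compare two {column: type} mappings and report the differences."""
    shared = expected.keys() & observed.keys()
    return {
        "missing": sorted(expected.keys() - observed.keys()),
        "added": sorted(observed.keys() - expected.keys()),
        "retyped": sorted(c for c in shared if expected[c] != observed[c]),
    }


def is_stale(last_update: datetime, max_age: timedelta, now=None) -> bool:
    """Return True when the most recent update is older than max_age."""
    now = now or datetime.now(timezone.utc)
    return now - last_update > max_age


# Hypothetical schemas for an orders table.
expected = {"order_id": "bigint", "amount": "decimal(10,2)", "created_at": "timestamp"}
observed = {"order_id": "string", "amount": "decimal(10,2)", "channel": "string"}

drift = schema_drift(expected, observed)
print(drift)

# Hypothetical freshness check: data last landed 7 hours ago, window is 6 hours.
now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
print(is_stale(now - timedelta(hours=7), max_age=timedelta(hours=6), now=now))
```

Checks like these only catch problems that show up in metadata; value-level anomalies need separate statistical monitoring.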

Where Revefi Fits In

Revefi monitors Databricks environments across three areas (cost, performance, and data quality), with specific coverage for Genie and Mosaic AI workloads.

On the cost side, it tracks consumption at a granular level so teams can see what's driving spend, whether that's a runaway pipeline, a surge in Genie usage from a particular team, or a Mosaic AI training job that ran longer than expected. The goal is to surface that information before it becomes a billing surprise.

On performance, Revefi monitors pipelines and flags degradation when it happens. For Genie, that means tracking query response times and identifying the underlying bottlenecks. For Mosaic AI, it covers training job efficiency and inference latency. Most teams currently piece this together from logs and dashboards across multiple tools; Revefi tries to consolidate that into a single view.

On data quality, it watches for anomalies, freshness issues, and schema changes across pipelines. These are the kinds of problems that are a minor fix when caught early and a multi-day incident when caught late. Because Genie and Mosaic AI depend so heavily on reliable data, automated detection matters more as those workloads scale.

Learn more at revefi.com or request a demo.