Cloud Cost Management Challenges Currently Faced by DBAs, and Data Teams

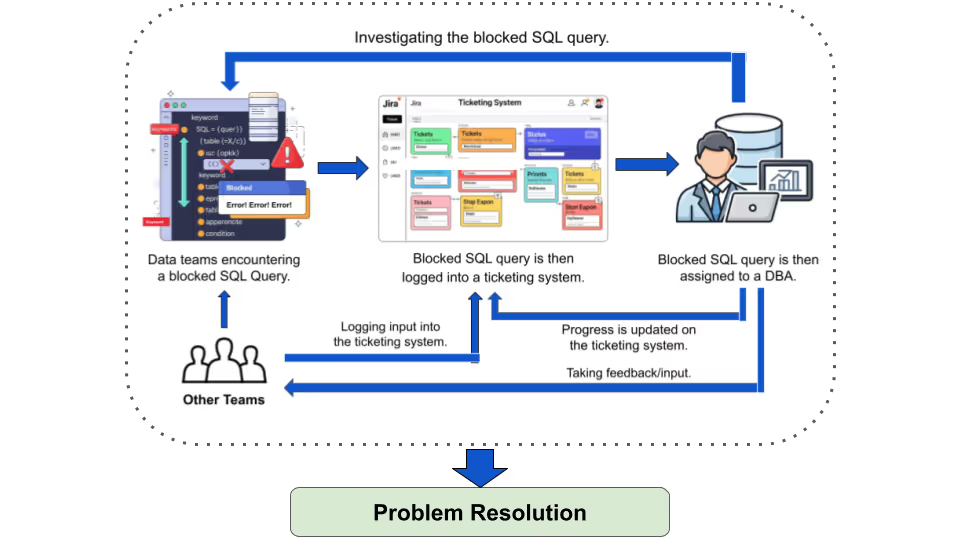

Traditionally, database performance issues have been managed by DBAs and data engineers using manual methods such as query logs, performance monitors, and custom scripts. The process typically involves identifying a slow query through alerts, analyzing logs for the root cause, and then applying fixes like:

- Index tuning

- SQL rewrites

- Resource adjustments.

In large-scale environments, this cycle can take hours or even days to resolve. And in the case of ticket-based workflows, things are further slowed down!

Legacy database performance tools lack real-time query monitoring, providing insights only after disruptions have already impacted workloads.

Manual troubleshooting leads to inconsistency, since different DBAs may handle the same cloud database performance problem in different ways.

As data volumes grow, this ticket-driven approach makes it difficult to maintain scalable SQL query optimization and ensure efficient data warehouse operations.

As data volumes continue to grow, scaling this manual, ticket-driven approach becomes unsustainable.

The result is slower decision-making, increased operational risk, and reduced agility in responding to fast-changing market conditions, ultimately jeopardizing business continuity!

The Need for Actual “Real-Time” Solutions

Given these challenges, data teams crave "real-time" solutions that monitor, alert, and remediate without human intervention.

Real-time implies zero lag in detection and action, turning reactive processes into proactive ones.

This shift is essential for maintaining business agility, especially in industries like FinTech (where milliseconds matter with respect to fraud detection, and reconciliation compliance), or e-commerce (where real-time inventory analytics prevent stockouts).

Enter AI-powered agents, which promise to automate these tasks by learning from patterns to predict and prevent issues. By integrating AI into DataOps, organizations can achieve continuous improvement by reducing manual overhead, and enhancing reliability.

Revefi’s AI Agent (RADEN)

Unlike traditional tools, RADEN is an agentic AI built to address challenges faced by traditional data observability and monitoring tools.

This means it’s capable of reasoning, planning, and acting independently. It installs in minutes without accessing sensitive data, using metadata for analysis, ensuring compliance and security.

RADEN's core strength lies in its three-layer framework:

1. Observability: It collects real-time telemetry on query stats, warehouse utilization, and storage metrics, which enables it to handle millions of events daily. This enables instant anomaly detection, such as spotting a query spike causing cost overruns.

2. Prediction: In prediction, RADEN employs AI to forecast usage patterns, using multivariate time series and reinforcement learning to simulate optimizations. It predicts future costs and alerts on potential issues, like impending storage bloat.

3. Automation: Automation is where RADEN truly shines! It resizes warehouses dynamically, pauses idle resources, optimizes queries by suggesting partitioning or caching, and manages storage through archival policies. For example, in Snowflake, it adjusts virtual warehouses based on load.

RADEN learns adaptively from outcomes, refining decisions over time, and supports multi-cloud environments. Features include automated FinOps for budgeting, data observability for quality checks, and performance tuning to reduce query times.

Benefits of RADEN

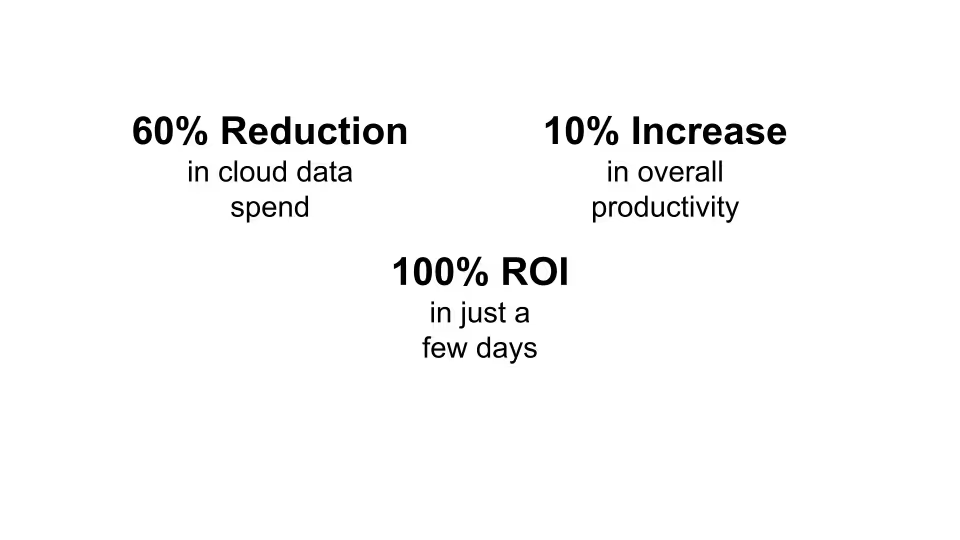

The outcomes are transformative. Businesses report reductions in cloud data costs, with operational efficiency improving 10x. Quicker insights come from faster query resolutions, while reduced costs stem from eliminating waste like idle resources or inefficient queries. Productivity soars as teams shift from firefighting to innovation.

Real users highlight scalability without cost spikes, enhanced security through 24/7 monitoring, and greater than ROI within days.

Example:

For an e-commerce platform using Snowflake, RADEN detects and remediates a blocked query during Black Friday, preventing downtime and saving thousands in lost sales.

Conclusion: A Future of Streamlined Data Operations

As data continues to grow exponentially, solutions like RADEN are essential for navigating complexities without sacrificing speed or cost. By providing real-time monitoring, alerting, and remediation, Revefi's AI agent eliminates delays, empowering data teams to focus on what matters: driving business growth.

The shift to AI-powered DataOps isn't just an upgrade, as it's a necessity for thriving in an increasingly data-centric world.

With tools like RADEN, the future of data warehouses looks efficient, resilient, and innovative.