How agentic AI is transforming enterprise data management from reactive assistant to autonomous agent that reasons, plans, and acts.

We are at an inflection point in enterprise AI. Agentic AI systems that can autonomously reason, plan, and take action have moved from hype to production reality. Yesterday, I participated in a live panel titled "The Agentic Shift: Designing AI That Can Reason, Plan, and Act," exploring what this transformation really means for data teams, data engineers, and the organizations they support. Here are my key takeaways.

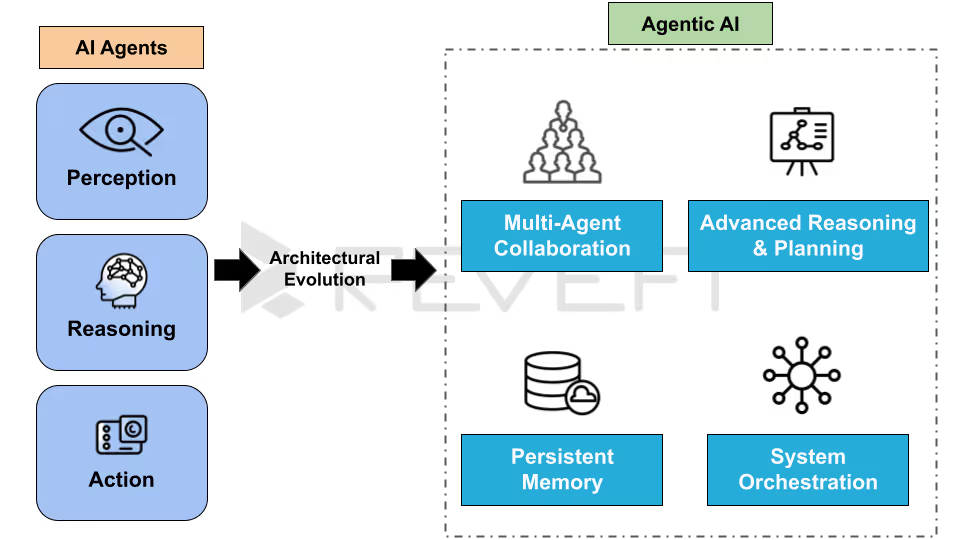

What Is Agentic AI and How Is It Different from Traditional AI Assistants?

Agentic AI describes AI systems that autonomously pursue defined goals over time. These systems continuously assess their environment, reason about changing conditions, create multi-step plans, and execute tasks rather than simply reacting to single prompts. Unlike traditional large language model (LLM) assistants or AI copilots that operate on a request-by-request basis, agentic AI maintains contextual awareness, measures results, and dynamically adjusts its actions without requiring new instructions at every stage.

A traditional AI tool answers questions. An autonomous AI agent gets things done.

Here’s a real-world example from our work here at Revefi. If you ask a standard AI assistant or a Snowflake Bot why your Snowflake costs increased this week, it may generate a reasonable explanation based solely on the information you provide. However, an agentic AI solution like Revefi’s RADEN takes it several steps further.

It directly analyzes your data warehouse metadata, reviews recently executed workloads, detects that a particular query started performing full table scans after a clustering key was removed, and then either remediates the issue automatically or clearly identifies the exact corrective action required.

This combination of goal-oriented execution and sustained follow-through is what truly defines the agentic AI shift.

What Does It Actually Take to Build Reliable Agentic AI for Production?

Modern large language models (LLMs) demonstrate impressive reasoning capabilities in controlled demos. The real challenge is making that reasoning dependable enough for production environments that manage live data pipelines and cloud infrastructure. Delivering production-ready agentic AI requires a disciplined engineering approach built on three core principles.

First, maintain a tightly defined scope. AI agents designed to handle overly broad responsibilities tend to behave unpredictably. In contrast, narrowly focused agents (such as those built for cost anomaly detection, query performance degradation analysis, or schema change impact assessment) consistently deliver stronger reliability, transparency, and auditability than general-purpose systems.

Second, ensure every step is verifiable. Each action an autonomous AI agent performs must have a measurable and testable outcome. For example, if the agent modifies a warehouse configuration to improve performance or reduce costs, the system must confirm that the expected improvement occurred before advancing to the next step.
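To make the "verify before advancing" idea concrete, here is a minimal Python sketch. The names `VerifiableStep` and `run_plan`, and the use of a plain dictionary as the platform state, are hypothetical illustrations and not Revefi's implementation; a real agent would apply and verify changes against the warehouse itself.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical stand-in for live platform state (settings, metrics).
State = Dict[str, float]


@dataclass
class VerifiableStep:
    name: str
    apply: Callable[[State], object]     # performs the change
    verify: Callable[[State], bool]      # checks the measurable outcome
    rollback: Callable[[State], object]  # undoes the change if verification fails


def run_plan(steps: List[VerifiableStep], state: State) -> List[str]:
    """Run steps in order and stop at the first step that cannot be verified."""
    completed: List[str] = []
    for step in steps:
        step.apply(state)
        if not step.verify(state):
            # The expected improvement did not occur: undo and halt the plan.
            step.rollback(state)
            break
        completed.append(step.name)
    return completed
```

The design choice worth noting is that a failed verification both rolls back the step and halts the plan, so later steps never build on an unconfirmed change.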

Third, design a controlled tool surface. While ReAct-style reasoning loops and orchestration frameworks like LangGraph enable effective stateful AI workflows, the real advantage comes from precisely defining which tools the agent can access, and explicitly restricting those it cannot. This structured control is essential for building trustworthy, enterprise-grade agentic AI systems.

What Infrastructure Does Agentic AI Require for Enterprise Data Management?

For enterprise data management use cases (which include data engineering, pipeline observability, Cloud FinOps, and DataOps), three foundational infrastructure components are essential to deploy effective autonomous AI agents in production environments.

Metadata access:

Autonomous agents require real-time visibility into the data platform to operate accurately. Without live operational context, an agent is effectively making assumptions rather than data-driven decisions. Platforms like Revefi integrate directly with major cloud data warehouses such as Snowflake, Databricks, Google BigQuery, and Amazon Redshift, continuously ingesting telemetry and metadata to provide agents with accurate situational awareness.

Persistent memory:

Enterprise-grade agentic AI systems must retain historical context. If an agent previously analyzed a Snowflake cost spike and traced it to a specific team’s batch processing job, it should recall that insight when a similar pattern appears again. This retained institutional knowledge enables autonomous agents to improve decision-making accuracy and efficiency over time.
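As a rough illustration of persistent memory, the Python sketch below stores past findings in SQLite (from the standard library) and looks them up when the same pattern recurs. The `FindingsMemory` class, the table layout, and the exact-match pattern key are all assumptions made for this example; a production system would likely need fuzzier matching.

```python
import sqlite3
from typing import List


class FindingsMemory:
    """Stores root causes the agent has already established, keyed by pattern."""

    def __init__(self, path: str = ":memory:") -> None:
        # Pass a file path instead of ":memory:" to persist across restarts.
        self._db = sqlite3.connect(path)
        self._db.execute(
            "CREATE TABLE IF NOT EXISTS findings ("
            "id INTEGER PRIMARY KEY, pattern TEXT NOT NULL, root_cause TEXT NOT NULL)"
        )

    def remember(self, pattern: str, root_cause: str) -> None:
        with self._db:  # commits on success, rolls back on error
            self._db.execute(
                "INSERT INTO findings (pattern, root_cause) VALUES (?, ?)",
                (pattern, root_cause),
            )

    def recall(self, pattern: str) -> List[str]:
        # Most recent finding first.
        rows = self._db.execute(
            "SELECT root_cause FROM findings WHERE pattern = ? ORDER BY id DESC",
            (pattern,),
        ).fetchall()
        return [row[0] for row in rows]
```

An agent would call `recall` at the start of an investigation and `remember` once a root cause is confirmed, so a repeat cost spike starts from the earlier conclusion rather than from scratch.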

Closed feedback loops:

After executing any action, the AI agent must validate whether the intended outcome was achieved: for example, whether cloud spend declined, query performance improved, or a data quality issue was resolved. Autonomous intervention without measurable outcome verification introduces operational risk. True agentic AI depends on continuous monitoring, validation, and iterative optimization.

How Should Teams Rethink Human-in-the-Loop When AI Agents Act Autonomously?

As agentic AI becomes more capable of independent reasoning and action, organizations must redefine the human-in-the-loop model. Instead of requiring approval for every automated step, enterprises should shift toward exception management and policy-based governance. Reviewing every autonomous decision does not scale, and over time, constant approval requests erode reviewer attention and reduce the agent's overall effectiveness.

For reversible, low-risk actions (such as pausing an underutilized warehouse, tuning query optimization parameters, or resizing compute resources), AI agents should operate autonomously within clearly defined thresholds. Human oversight is most valuable at the policy level: setting budget guardrails, defining performance SLAs, and establishing acceptable risk parameters.

However, higher-impact actions that directly modify data assets (such as altering tables, updating pipelines, or changing dependencies that affect downstream systems) should require human review until the AI agent demonstrates a consistent, auditable track record within that domain or function.
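The split between autonomous and reviewed actions can be expressed as a small policy function. This Python sketch is illustrative only: `ProposedAction`, `Policy`, `route`, and the dollar threshold are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_EXECUTE = "auto_execute"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class ProposedAction:
    name: str
    reversible: bool
    modifies_data_assets: bool
    estimated_cost_impact_usd: float


@dataclass(frozen=True)
class Policy:
    # Threshold set by humans at the policy level, not per action.
    max_auto_cost_impact_usd: float


def route(action: ProposedAction, policy: Policy) -> Decision:
    # Anything that touches data assets or cannot be undone goes to a human.
    if action.modifies_data_assets or not action.reversible:
        return Decision.HUMAN_REVIEW
    # Reversible actions still need review when they exceed the budget guardrail.
    if abs(action.estimated_cost_impact_usd) > policy.max_auto_cost_impact_usd:
        return Decision.HUMAN_REVIEW
    return Decision.AUTO_EXECUTE
```

Under this sketch, humans tune `Policy` rather than approving individual actions, and the review path shrinks only when the policy itself is deliberately relaxed.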

The guiding principle for responsible AI governance is clarity.

Teams must be able to easily understand why an agent made a decision, what data informed it, and how confident the system was in its conclusion. In production AI environments, explainability matters more than rigid approval workflows. Transparency builds trust, and trust enables greater autonomy!

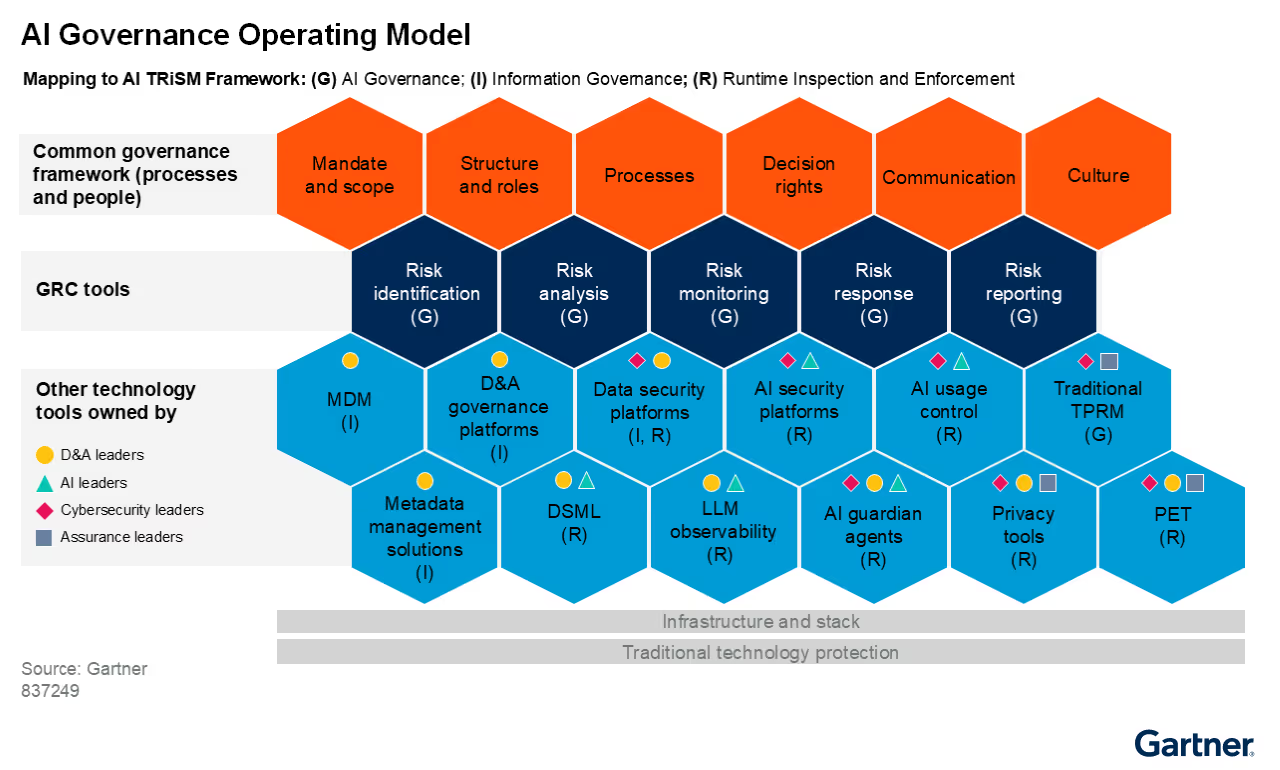

How Do You Govern and Audit Autonomous AI Agents in Enterprise Environments?

Autonomous AI agents invert traditional governance models. Instead of approving every action before it happens, enterprises need to trace, explain, and audit AI actions as they execute and after the fact.

Audit trails for agentic AI must capture not just what the agent did, but why. At Revefi, every autonomous agent action is tied to a full reasoning chain. If the agent terminated a Snowflake query running for six hours and consuming a disproportionate share of warehouse credits, the audit log records what triggered the investigation, what data the agent examined, what it concluded, and what it did.
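An audit entry that captures the "why" alongside the "what" might look like the following Python sketch. The `AuditRecord` fields mirror the four items listed above (trigger, data examined, conclusion, action); the class itself and the JSON serialization are assumptions for illustration, not Revefi's actual log format.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass(frozen=True)
class AuditRecord:
    trigger: str         # what started the investigation
    evidence: List[str]  # what data the agent examined
    conclusion: str      # what the agent concluded
    action: str          # what the agent did
    recorded_at: str = field(default_factory=_utc_now)

    def to_json(self) -> str:
        # Sorted keys keep entries stable and easy to diff.
        return json.dumps(asdict(self), sort_keys=True)
```

Making the reasoning fields required constructor arguments means an action cannot be logged without its justification.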

AI governance must also define agent permissions upfront: what each agent can and cannot touch, under what conditions, and who is notified when it acts on production systems. These constraints must be codified before deployment, not discovered after something unexpected happens.

Where Is Agentic AI Already Delivering ROI in Enterprise Data Teams?

One of the most immediate and measurable returns on investment (ROI) from agentic AI in enterprise data environments is cloud cost optimization, particularly across platforms like Snowflake, Databricks, and Google BigQuery. Cloud waste is both common and correctable: mis-sized warehouses, poorly optimized queries, and compute resources running longer than necessary. These are precisely the types of well-defined, repeatable problems that narrowly scoped autonomous data agents can resolve effectively.

Revefi’s customers, which include Verisk, AMD, and Stanley Black & Decker, have achieved Snowflake cost reductions ranging from 30% to 70%.

Organizations that realize these results most quickly are those that empower AI agents with meaningful operational autonomy, rather than funneling every optimization recommendation through manual approval workflows.

Automated data quality management is another high-impact application. When autonomous AI agents continuously monitor tables and data pipelines for freshness gaps, schema modifications, and row-count anomalies, data engineering teams can redirect their focus toward strategic, higher-value initiatives.
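The three checks named above (freshness gaps, schema modifications, row-count anomalies) can each be sketched in a few lines of Python. The function names, the z-score approach, and the default threshold of 3.0 are assumptions for illustration; real monitors typically account for seasonality and trends as well.

```python
from datetime import datetime, timedelta
from statistics import mean, pstdev
from typing import Dict, List, Sequence


def is_stale(last_loaded: datetime, now: datetime, max_age: timedelta) -> bool:
    """Freshness gap: the table has not been loaded within max_age."""
    return now - last_loaded > max_age


def schema_changes(previous: Dict[str, str], current: Dict[str, str]) -> Dict[str, List[str]]:
    """Compare column-name -> type mappings from two snapshots."""
    shared = previous.keys() & current.keys()
    return {
        "added": sorted(current.keys() - previous.keys()),
        "removed": sorted(previous.keys() - current.keys()),
        "retyped": sorted(col for col in shared if previous[col] != current[col]),
    }


def row_count_is_anomalous(history: Sequence[int], latest: int, z_threshold: float = 3.0) -> bool:
    """Flag the latest row count if it is far from the historical mean."""
    if len(history) < 2:
        return False  # not enough history to judge
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > z_threshold
```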

Across production deployments, a consistent pattern emerges: clearly defined, measurable objectives (such as reducing cloud spend, maintaining SLAs, or detecting anomalies) drive significantly stronger outcomes than broad or ambiguous goals.

What Are the Emerging Agentic AI Design Patterns for the Next 12–24 Months?

Over the next one to two years, several enterprise-focused agentic AI design patterns are expected to mature and become standard practice.

Multi-agent orchestration will likely emerge as the dominant architectural model. Instead of relying on a single AI agent to manage an entire workflow end-to-end, organizations will deploy specialized agents that collaborate in sequence. For example, one agent monitors for anomalies, another performs root cause analysis, and a third executes remediation. This modular approach improves reliability, simplifies auditing, and enhances performance because each agent operates within a narrowly defined scope.
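The monitor, diagnose, remediate sequence can be sketched as three narrowly scoped functions chained by a small orchestrator. Everything here is a simplified Python illustration: the `Anomaly` and `Diagnosis` types, the 1.5x spike ratio, and the injected `find_root_cause` callable (a stand-in for a real root cause analysis agent) are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Anomaly:
    warehouse: str
    observed: float
    baseline: float


@dataclass(frozen=True)
class Diagnosis:
    anomaly: Anomaly
    root_cause: str


def monitor(daily_credits: List[float], warehouse: str, ratio: float = 1.5) -> Optional[Anomaly]:
    """Monitoring agent: flag the latest day if it exceeds ratio x the prior average."""
    if len(daily_credits) < 2:
        return None
    *history, latest = daily_credits
    baseline = sum(history) / len(history)
    if latest > ratio * baseline:
        return Anomaly(warehouse, latest, baseline)
    return None


def diagnose(anomaly: Anomaly, find_root_cause: Callable[[Anomaly], str]) -> Diagnosis:
    """Root cause agent: delegates to an injected analysis function."""
    return Diagnosis(anomaly, find_root_cause(anomaly))


def remediate(diagnosis: Diagnosis) -> str:
    """Remediation agent: returns a proposed action rather than executing it."""
    return f"{diagnosis.anomaly.warehouse}: address '{diagnosis.root_cause}'"


def run_pipeline(
    daily_credits: List[float], warehouse: str, find_root_cause: Callable[[Anomaly], str]
) -> Optional[str]:
    anomaly = monitor(daily_credits, warehouse)
    if anomaly is None:
        return None  # nothing to hand off to downstream agents
    return remediate(diagnose(anomaly, find_root_cause))
```

Because each stage exchanges a small typed record with the next, every handoff is a natural point to log, test, or insert a human review.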

Agent identity and access management (AI IAM) will also become a critical enterprise capability. Many organizations currently lack structured processes to provision, monitor, and revoke AI agent permissions with the same rigor applied to human employees. As autonomous agents perform increasingly sensitive actions across platforms like Snowflake and Databricks, formal governance, audit trails, and role-based access controls will become mandatory.

Finally, outcome-driven learning within specific environments will define long-term value creation. The most effective autonomous AI agents will not simply rely on generalized training data; they will accumulate institutional knowledge about an organization’s unique data architecture, workload behaviors, and cost patterns. An AI agent operating within a Snowflake environment for several months should develop a far deeper contextual understanding than a newly deployed system, thereby enabling progressively smarter optimization and decision-making over time.

How Should Data Teams Prepare for Agentic AI Today?

The most important foundation for successful agentic AI deployment is clean, accessible metadata and telemetry. Autonomous AI agents are only as good as the context they operate in. Fragmented data, missing lineage, and unmonitored pipelines directly degrade agent decision quality.

The broader data management and analytics ecosystem is still catching up to agentic AI. That gap is an opportunity. Teams that invest now with tight agent scope, strong observability foundations, and clear governance policies will build a meaningful and durable advantage.

The single most important mindset shift for enterprise data teams: stop asking "what should AI tell us?" and start asking "what should AI just handle?"

You can view the entire webinar here: The Agentic Shift: Designing AI That Can Reason, Plan, and Act.